Learning Progress: 60%.

- https://mlr3book.mlr-org.com/

- 中文翻译由 ChatGPT 3.5 提供

Getting Started

1 Introduction and Overview

mlr3 by Example:

set.seed(123)

task = tsk("penguins")

split = partition(task)

learner = lrn("classif.rpart")

learner$train(task, row_ids = split$train)

learner$model

#> n= 231

#>

#> node), split, n, loss, yval, (yprob)

#> * denotes terminal node

#>

#> 1) root 231 129 Adelie (0.441558442 0.199134199 0.359307359)

#> 2) flipper_length< 206.5 144 44 Adelie (0.694444444 0.298611111 0.006944444)

#> 4) bill_length< 43.05 98 3 Adelie (0.969387755 0.030612245 0.000000000) *

#> 5) bill_length>=43.05 46 6 Chinstrap (0.108695652 0.869565217 0.021739130) *

#> 3) flipper_length>=206.5 87 5 Gentoo (0.022988506 0.034482759 0.942528736) *

prediction = learner$predict(task, row_ids = split$test)

prediction

#> <PredictionClassif> for 113 observations:

#> row_ids truth response

#> 1 Adelie Adelie

#> 2 Adelie Adelie

#> 3 Adelie Adelie

#> ---

#> 328 Chinstrap Chinstrap

#> 331 Chinstrap Adelie

#> 339 Chinstrap Chinstrap

prediction$score(msr("classif.acc"))

#> classif.acc

#> 0.9557522The mlr3 interface also lets you run more complicated experiments in just a few lines of code:

We use dictionaries to group large collections of relevant objects so they can be listed and retrieved easily. For example, you can see an overview of available learners (that are in loaded packages) and their properties with as.data.table(mlr_learners) or by calling the sugar function without any arguments, e.g. lrn().

我们使用字典来分组大量相关对象,以便可以轻松地列出和检索它们。例如,您可以通过

as.data.table(mlr_learners)查看可用学习器(位于加载的包中)及其属性的概述,或者通过调用糖函数而不带任何参数,例如lrn()。

as.data.table(mlr_learners)[1:3]

#> Key: <key>

#> key label task_type

#> <char> <char> <char>

#> 1: classif.cv_glmnet GLM with Elastic Net Regularization classif

#> 2: classif.debug Debug Learner for Classification classif

#> 3: classif.featureless Featureless Classification Learner classif

#> feature_types

#> <list>

#> 1: logical,integer,numeric

#> 2: logical,integer,numeric,character,factor,ordered

#> 3: logical,integer,numeric,character,factor,ordered,...

#> packages

#> <list>

#> 1: mlr3,mlr3learners,glmnet

#> 2: mlr3

#> 3: mlr3

#> properties

#> <list>

#> 1: multiclass,selected_features,twoclass,weights

#> 2: hotstart_forward,missings,multiclass,twoclass

#> 3: featureless,importance,missings,multiclass,selected_features,twoclass

#> predict_types

#> <list>

#> 1: response,prob

#> 2: response,prob

#> 3: response,probFundamentals

2 Data and Basic Modeling

2.1 Tasks

2.1.1 Constructing Tasks

mlr3 includes a few predefined machine learning tasks in the mlr_tasks Dictionary.

mlr_tasks

#> <DictionaryTask> with 21 stored values

#> Keys: ames_housing, bike_sharing, boston_housing, breast_cancer,

#> german_credit, ilpd, iris, kc_housing, moneyball, mtcars, optdigits,

#> penguins, penguins_simple, pima, ruspini, sonar, spam, titanic,

#> usarrests, wine, zoo

# the same as

# tsk()tsk_mtcars = tsk("mtcars")

tsk_mtcars

#> <TaskRegr:mtcars> (32 x 11): Motor Trends

#> * Target: mpg

#> * Properties: -

#> * Features (10):

#> - dbl (10): am, carb, cyl, disp, drat, gear, hp, qsec, vs, wt# create my own regression task

data("mtcars", package = "datasets")

mtcars_subset = subset(mtcars, select = c("mpg", "cyl", "disp"))

tsk_mtcars = as_task_regr(mtcars_subset, target = "mpg", id = "cars")

tsk_mtcars

#> <TaskRegr:cars> (32 x 3)

#> * Target: mpg

#> * Properties: -

#> * Features (2):

#> - dbl (2): cyl, dispThe id argument is optional and specifies an identifier for the task that is used in plots and summaries; if omitted the variable name of the data will be used as the id.

2.1.2 Retrieving Data

c(tsk_mtcars$nrow, tsk_mtcars$ncol)

#> [1] 32 3c(Features = tsk_mtcars$feature_names,

Target = tsk_mtcars$target_names)

#> Features1 Features2 Target

#> "cyl" "disp" "mpg"Row IDs are not used as features when training or predicting but are metadata that allow access to individual observations. Note that row IDs are not the same as row numbers.

This design decision allows tasks and learners to transparently operate on real database management systems, where primary keys are required to be unique, but not necessarily consecutive.

行ID在训练或预测时不作为特征使用,而是元数据,用于访问个别观测数据。需要注意的是,行ID与行号不同。

这种设计决策使得任务和学习器能够透明地在真实的数据库管理系统上运行,其中要求主键是唯一的,但不一定连续。

task = as_task_regr(data.frame(x = runif(5), y = runif(5)),

target = "y")

task$row_ids

#> [1] 1 2 3 4 5

task$filter(c(4, 1, 3))

task$row_ids

#> [1] 1 3 4tsk_mtcars$data()[1:3]

#> mpg cyl disp

#> <num> <num> <num>

#> 1: 21.0 6 160

#> 2: 21.0 6 160

#> 3: 22.8 4 108

tsk_mtcars$data(rows = c(1, 5, 10), cols = tsk_mtcars$feature_names)

#> cyl disp

#> <num> <num>

#> 1: 6 160.0

#> 2: 8 360.0

#> 3: 6 167.62.1.3 Task Mutators

tsk_mtcars_small = tsk("mtcars")

tsk_mtcars_small$select("cyl")

tsk_mtcars_small$filter(2:3)

tsk_mtcars_small$data()

#> mpg cyl

#> <num> <num>

#> 1: 21.0 6

#> 2: 22.8 4As R6 uses reference semantics, you need to use $clone() if you want to modify a task while keeping the original object intact.

tsk_mtcars = tsk("mtcars")

tsk_mtcars_clone = tsk_mtcars$clone()

tsk_mtcars_clone$filter(1:2)

tsk_mtcars_clone$head()

#> mpg am carb cyl disp drat gear hp qsec vs wt

#> <num> <num> <num> <num> <num> <num> <num> <num> <num> <num> <num>

#> 1: 21 1 4 6 160 3.9 4 110 16.46 0 2.620

#> 2: 21 1 4 6 160 3.9 4 110 17.02 0 2.875To add extra rows and columns to a task, you can use $rbind() and $cbind() respectively:

tsk_mtcars_small

#> <TaskRegr:mtcars> (2 x 2): Motor Trends

#> * Target: mpg

#> * Properties: -

#> * Features (1):

#> - dbl (1): cyl

tsk_mtcars_small$cbind(data.frame(disp = c(150, 160)))

tsk_mtcars_small$rbind(data.frame(mpg = 23, cyl = 5, disp = 170))

tsk_mtcars_small$data()

#> mpg cyl disp

#> <num> <num> <num>

#> 1: 21.0 6 150

#> 2: 22.8 4 160

#> 3: 23.0 5 1702.2 Learners

# all the learners available in mlr3

mlr_learners

#> <DictionaryLearner> with 46 stored values

#> Keys: classif.cv_glmnet, classif.debug, classif.featureless,

#> classif.glmnet, classif.kknn, classif.lda, classif.log_reg,

#> classif.multinom, classif.naive_bayes, classif.nnet, classif.qda,

#> classif.ranger, classif.rpart, classif.svm, classif.xgboost,

#> clust.agnes, clust.ap, clust.cmeans, clust.cobweb, clust.dbscan,

#> clust.diana, clust.em, clust.fanny, clust.featureless, clust.ff,

#> clust.hclust, clust.kkmeans, clust.kmeans, clust.MBatchKMeans,

#> clust.mclust, clust.meanshift, clust.pam, clust.SimpleKMeans,

#> clust.xmeans, regr.cv_glmnet, regr.debug, regr.featureless,

#> regr.glmnet, regr.kknn, regr.km, regr.lm, regr.nnet, regr.ranger,

#> regr.rpart, regr.svm, regr.xgboost

# lrns()lrn("regr.rpart")

#> <LearnerRegrRpart:regr.rpart>: Regression Tree

#> * Model: -

#> * Parameters: xval=0

#> * Packages: mlr3, rpart

#> * Predict Types: [response]

#> * Feature Types: logical, integer, numeric, factor, ordered

#> * Properties: importance, missings, selected_features, weightsAll Learner objects include the following metadata, which can be seen in the output above:

$feature_types: the type of features the learner can handle.$packages: the packages required to be installed to use the learner.$properties: the properties of the learner. For example, the “missings” properties means a model can handle missing data, and “importance” means it can compute the relative importance of each feature.$predict_types: the types of prediction that the model can make.$param_set: the set of available hyperparameters.

2.2.1 Training

After training, the fitted model is stored in the $model field for future inspection and prediction:

lrn_rpart$model

#> n= 32

#>

#> node), split, n, deviance, yval

#> * denotes terminal node

#>

#> 1) root 32 1126.04700 20.09062

#> 2) cyl>=5 21 198.47240 16.64762

#> 4) hp>=192.5 7 28.82857 13.41429 *

#> 5) hp< 192.5 14 59.87214 18.26429 *

#> 3) cyl< 5 11 203.38550 26.66364 *

splits = partition(tsk_mtcars)

splits

#> $train

#> [1] 1 2 3 4 5 21 25 27 32 7 13 15 16 17 22 23 29 31 18 26 28

#>

#> $test

#> [1] 8 9 10 30 6 11 12 14 24 19 20

lrn_rpart$train(tsk_mtcars, row_ids = splits$train)2.2.2 Predicting

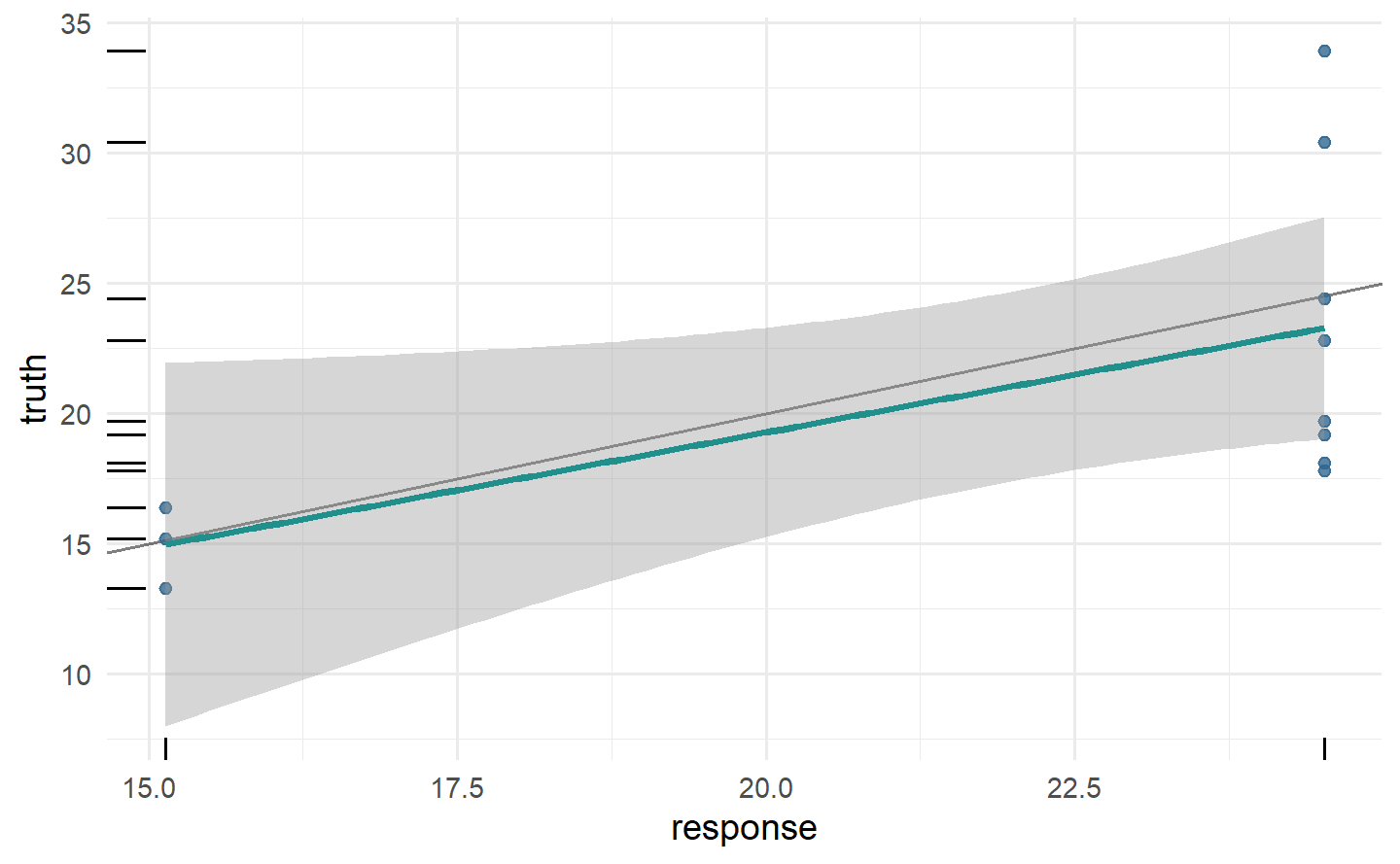

prediction = lrn_rpart$predict(tsk_mtcars, row_ids = splits$test)

prediction

#> <PredictionRegr> for 11 observations:

#> row_ids truth response

#> 8 24.4 24.52000

#> 9 22.8 24.52000

#> 10 19.2 24.52000

#> ---

#> 24 13.3 15.13636

#> 19 30.4 24.52000

#> 20 33.9 24.52000

autoplot(prediction)

mtcars_new = data.table(cyl = c(5, 6), disp = c(100, 120),

hp = c(100, 150), drat = c(4, 3.9), wt = c(3.8, 4.1),

qsec = c(18, 19.5), vs = c(1, 0), am = c(1, 1),

gear = c(6, 4), carb = c(3, 5))

prediction = lrn_rpart$predict_newdata(mtcars_new)

prediction

#> <PredictionRegr> for 2 observations:

#> row_ids truth response

#> 1 NA 24.52

#> 2 NA 24.522.2.3 Hyperparameters

lrn_rpart$param_set

#> <ParamSet>

#> id class lower upper nlevels

#> <char> <char> <num> <num> <num>

#> 1: cp ParamDbl 0 1 Inf

#> 2: keep_model ParamLgl NA NA 2

#> 3: maxcompete ParamInt 0 Inf Inf

#> 4: maxdepth ParamInt 1 30 30

#> 5: maxsurrogate ParamInt 0 Inf Inf

#> 6: minbucket ParamInt 1 Inf Inf

#> 7: minsplit ParamInt 1 Inf Inf

#> 8: surrogatestyle ParamInt 0 1 2

#> 9: usesurrogate ParamInt 0 2 3

#> 10: xval ParamInt 0 Inf Inf

#> default

#> <list>

#> 1: 0.01

#> 2: FALSE

#> 3: 4

#> 4: 30

#> 5: 5

#> 6: <NoDefault>\n Public:\n clone: function (deep = FALSE) \n initialize: function ()

#> 7: 20

#> 8: 0

#> 9: 2

#> 10: 10

#> value

#> <list>

#> 1:

#> 2:

#> 3:

#> 4:

#> 5:

#> 6:

#> 7:

#> 8:

#> 9:

#> 10: 0# change hyperparameter

lrn_rpart = lrn("regr.rpart", maxdepth = 1)

lrn_rpart$param_set$values

#> $xval

#> [1] 0

#>

#> $maxdepth

#> [1] 1# learned regression tree

lrn_rpart$train(tsk("mtcars"))$model

#> n= 32

#>

#> node), split, n, deviance, yval

#> * denotes terminal node

#>

#> 1) root 32 1126.0470 20.09062

#> 2) cyl>=5 21 198.4724 16.64762 *

#> 3) cyl< 5 11 203.3855 26.66364 *# another way to update hyperparameters

lrn_rpart$param_set$values$maxdepth = 2

lrn_rpart$param_set$values

#> $xval

#> [1] 0

#>

#> $maxdepth

#> [1] 2

# now with depth 2

lrn_rpart$train(tsk("mtcars"))$model

#> n= 32

#>

#> node), split, n, deviance, yval

#> * denotes terminal node

#>

#> 1) root 32 1126.04700 20.09062

#> 2) cyl>=5 21 198.47240 16.64762

#> 4) hp>=192.5 7 28.82857 13.41429 *

#> 5) hp< 192.5 14 59.87214 18.26429 *

#> 3) cyl< 5 11 203.38550 26.66364 *# or with set_values()

lrn_rpart$param_set$set_values(xval = 2, cp = .5)

lrn_rpart$param_set$values

#> $xval

#> [1] 2

#>

#> $maxdepth

#> [1] 2

#>

#> $cp

#> [1] 0.52.2.4 Baseline Learners

Baselines are useful in model comparison and as fallback learners. For regression, we have implemented the baseline lrn("regr.featureless"), which always predicts new values to be the mean (or median, if the robust hyperparameter is set to TRUE) of the target in the training data:

基线在模型比较和作为备用学习器中非常有用。对于回归问题,我们已经实现了名为 lrn("regr.featureless") 的基线,它总是预测新值为训练数据中目标的均值(如果鲁棒性参数设置为 TRUE,则为中位数):

task = as_task_regr(data.frame(x = runif(1000), y = rnorm(1000, 2, 1)),

target = "y")

lrn("regr.featureless")$train(task, 1:995)$predict(task, 996:1000)

#> <PredictionRegr> for 5 observations:

#> row_ids truth response

#> 996 1.484589 2.034983

#> 997 3.012537 2.034983

#> 998 1.964060 2.034983

#> 999 1.332658 2.034983

#> 1000 2.923380 2.034983It is good practice to test all new models against a baseline, and also to include baselines in experiments with multiple other models. In general, a model that does not outperform a baseline is a ‘bad’ model, on the other hand, a model is not necessarily ‘good’ if it outperforms the baseline.

在实践中,对所有新模型进行与基线的测试是一个良好的做法,同时在与多个其他模型进行实验时也要包括基线。通常情况下,如果一个模型无法超越基线,那么它可以被视为是一个不好的模型;另一方面,如果一个模型超越了基线,也不一定就是一个好模型。

2.3 Evaluation

2.3.1 Measures

as.data.table(msr())[1:3]

#> Key: <key>

#> key label task_type packages

#> <char> <char> <char> <list>

#> 1: aic Akaike Information Criterion <NA> mlr3

#> 2: bic Bayesian Information Criterion <NA> mlr3

#> 3: classif.acc Classification Accuracy classif mlr3,mlr3measures

#> predict_type task_properties

#> <char> <list>

#> 1: <NA>

#> 2: <NA>

#> 3: responsemeasure = msr("regr.mae")

measure

#> <MeasureRegrSimple:regr.mae>: Mean Absolute Error

#> * Packages: mlr3, mlr3measures

#> * Range: [0, Inf]

#> * Minimize: TRUE

#> * Average: macro

#> * Parameters: list()

#> * Properties: -

#> * Predict type: response2.3.2 Scoring Predictions

Note that all task types have default measures that are used if the argument to $score() is omitted, for regression this is the mean squared error (msr("regr.mse")).

2.3.3 Technical Measures

mlr3 also provides measures that do not quantify the quality of the predictions of a model, but instead provide ‘meta’-information about the model. These include:

msr("time_train"): The time taken to train a model.msr("time_predict"): The time taken for the model to make predictions.msr("time_both"): The total time taken to train the model and then make predictions.msr("selected_features"): The number of features selected by a model, which can only be used if the model has the “selected_features” property.

These can be used after model training and predicting because we automatically store model run times whenever $train() and $predict() are called, so the measures above are equivalent to:

The selected_features measure calculates how many features were used in the fitted model.

msr_sf = msr("selected_features")

msr_sf

#> <MeasureSelectedFeatures:selected_features>: Absolute or Relative Frequency of Selected Features

#> * Packages: mlr3

#> * Range: [0, Inf]

#> * Minimize: TRUE

#> * Average: macro

#> * Parameters: normalize=FALSE

#> * Properties: requires_task, requires_learner, requires_model

#> * Predict type: NA# accessed hyperparameters with `$param_set`

msr_sf$param_set

#> <ParamSet>

#> id class lower upper nlevels

#> <char> <char> <num> <num> <int>

#> 1: normalize ParamLgl NA NA 2

#> default

#> <list>

#> 1: <NoDefault>\n Public:\n clone: function (deep = FALSE) \n initialize: function ()

#> value

#> <list>

#> 1: FALSEmsr_sf$param_set$values$normalize = TRUE

prediction$score(msr_sf, task = tsk_mtcars, learner = lrn_rpart)

#> selected_features

#> 0.1Note that we passed the task and learner as the measure has the requires_task and requires_learner properties.

2.4 Our First Regression Experiment

We have now seen how to train a model, make predictions and score them. What we have not yet attempted is to ascertain if our predictions are any ‘good’. So before look at how the building blocks of mlr3 extend to classification, we will take a brief pause to put together everything above in a short experiment to assess the quality of our predictions. We will do this by comparing the performance of a featureless regression learner to a decision tree with changed hyperparameters.

我们已经了解了如何训练模型、进行预测并对其进行评分。但是,我们尚未尝试确定我们的预测是否“好”。因此,在深入研究

mlr3的构建模块如何扩展到分类之前,我们将简要停顿一下,通过一个简短的实验来评估我们预测的质量。我们将通过比较无特征的回归学习器与更改超参数的决策树的性能来进行评估。

set.seed(349)

tsk_mtcars = tsk("mtcars")

splits = partition(tsk_mtcars)

lrn_featureless = lrn("regr.featureless")

lrn_rpart = lrn("regr.rpart", cp = .2, maxdepth = 5)

measures = msrs(c("regr.mse", "regr.mae"))

# train learners

lrn_featureless$train(tsk_mtcars, splits$train)

lrn_rpart$train(tsk_mtcars, splits$train)

# make and score predictions

lrn_featureless$predict(tsk_mtcars, splits$test)$score(measures)

#> regr.mse regr.mae

#> 26.726772 4.512987

lrn_rpart$predict(tsk_mtcars, splits$test)$score(measures)

#> regr.mse regr.mae

#> 6.932709 2.2064942.5 Classification

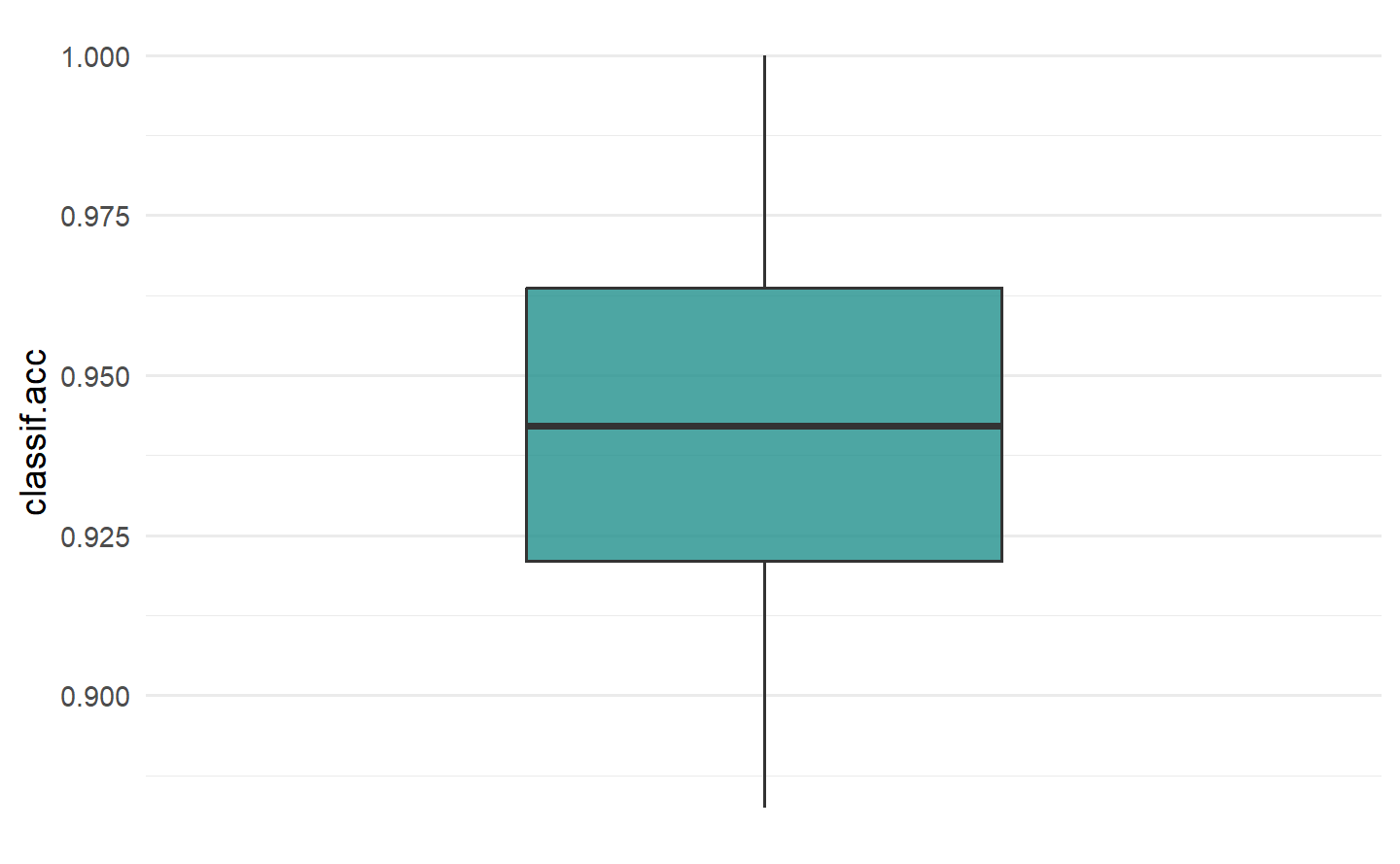

2.5.1 Our First Classification Experiment

set.seed(349)

tsk_penguins = tsk("penguins")

splits = partition(tsk_penguins)

lrn_featureless = lrn("classif.featureless")

lrn_rpart = lrn("classif.rpart", cp = .2, maxdepth = 5)

measure = msr("classif.acc")

# train learners

lrn_featureless$train(tsk_penguins, splits$train)

lrn_rpart$train(tsk_penguins, splits$train)

# make and score predictions

lrn_featureless$predict(tsk_penguins, splits$test)$score(measure)

#> classif.acc

#> 0.4424779

lrn_rpart$predict(tsk_penguins, splits$test)$score(measure)

#> classif.acc

#> 0.94690272.5.2 TaskClassif

as.data.table(tsks())[task_type == "classif"]

#> Key: <key>

#> key label task_type nrow

#> <char> <char> <char> <int>

#> 1: breast_cancer Wisconsin Breast Cancer classif 683

#> 2: german_credit German Credit classif 1000

#> 3: ilpd Indian Liver Patient Data classif 583

#> 4: iris Iris Flowers classif 150

#> 5: optdigits Optical Recognition of Handwritten Digits classif 5620

#> 6: penguins Palmer Penguins classif 344

#> 7: penguins_simple Simplified Palmer Penguins classif 333

#> 8: pima Pima Indian Diabetes classif 768

#> 9: sonar Sonar: Mines vs. Rocks classif 208

#> 10: spam HP Spam Detection classif 4601

#> 11: titanic Titanic classif 1309

#> 12: wine Wine Regions classif 178

#> 13: zoo Zoo Animals classif 101

#> ncol properties lgl int dbl chr fct ord pxc

#> <int> <list> <int> <int> <int> <int> <int> <int> <int>

#> 1: 10 twoclass 0 0 0 0 0 9 0

#> 2: 21 twoclass 0 3 0 0 14 3 0

#> 3: 11 twoclass 0 4 5 0 1 0 0

#> 4: 5 multiclass 0 0 4 0 0 0 0

#> 5: 65 twoclass 0 64 0 0 0 0 0

#> 6: 8 multiclass 0 3 2 0 2 0 0

#> 7: 11 multiclass 0 3 7 0 0 0 0

#> 8: 9 twoclass 0 0 8 0 0 0 0

#> 9: 61 twoclass 0 0 60 0 0 0 0

#> 10: 58 twoclass 0 0 57 0 0 0 0

#> 11: 11 twoclass 0 2 2 3 2 1 0

#> 12: 14 multiclass 0 2 11 0 0 0 0

#> 13: 17 multiclass 15 1 0 0 0 0 0The sonar task is an example of a binary classification problem, as the target can only take two different values, in mlr3 terminology it has the “twoclass” property:

tsk_sonar = tsk("sonar")

tsk_sonar

#> <TaskClassif:sonar> (208 x 61): Sonar: Mines vs. Rocks

#> * Target: Class

#> * Properties: twoclass

#> * Features (60):

#> - dbl (60): V1, V10, V11, V12, V13, V14, V15, V16, V17, V18, V19, V2,

#> V20, V21, V22, V23, V24, V25, V26, V27, V28, V29, V3, V30, V31,

#> V32, V33, V34, V35, V36, V37, V38, V39, V4, V40, V41, V42, V43,

#> V44, V45, V46, V47, V48, V49, V5, V50, V51, V52, V53, V54, V55,

#> V56, V57, V58, V59, V6, V60, V7, V8, V9tsk_sonar$class_names

#> [1] "M" "R"In contrast, tsk("penguins") is a multiclass problem as there are more than two species of penguins; it has the “multiclass” property:

tsk_penguins = tsk("penguins")

tsk_penguins$properties

#> [1] "multiclass"

tsk_penguins$class_names

#> [1] "Adelie" "Chinstrap" "Gentoo"A further difference between these tasks is that binary classification tasks have an extra field called $positive, which defines the ‘positive’ class. In binary classification, as there are only two possible class types, by convention one of these is known as the ‘positive’ class, and the other as the ‘negative’ class. It is arbitrary which is which, though often the more ‘important’ (and often smaller) class is set as the positive class. You can set the positive class during or after construction. If no positive class is specified then mlr3 assumes the first level in the target column is the positive class, which can lead to misleading results.

这两种任务之间的另一个区别是,二分类任务有一个额外的字段称为

$positive,它定义了“正类”(positive class)。在二分类问题中,由于只有两种可能的类别类型,按照惯例,其中一种被称为“正类”,另一种被称为“负类”。哪个是哪个是任意的,尽管通常更“重要”(通常更小)的类别被设置为正类。您可以在构建期间或之后设置正类。如果未指定正类,则mlr3假定目标列中的第一个级别是正类,这可能导致误导性的结果。

Sonar = tsk_sonar$data()

tsk_classif = as_task_classif(Sonar, target = "Class", positive = "R")

tsk_classif$positive

#> [1] "R"# changing after construction

tsk_classif$positive = "M"

tsk_classif$positive

#> [1] "M"2.5.3 LearnerClassif and MeasureClassif

Classification learners, which inherit from LearnerClassif, have nearly the same interface as regression learners. However, a key difference is that the possible predictions in classification are either "response" – predicting an observation’s class (a penguin’s species in our example, this is sometimes called “hard labeling”) – or "prob" – predicting a vector of probabilities, also called “posterior probabilities”, of an observation belonging to each class. In classification, the latter can be more useful as it provides information about the confidence of the predictions:

分类学习器(继承自

LearnerClassif)几乎具有与回归学习器相同的接口。然而,分类中的一个关键区别是,分类问题中可能的预测结果要么是"response"(预测观测的类别,例如我们示例中的企鹅物种,有时称为“硬标签”),要么是"prob"(预测属于每个类别的概率向量,也称为“后验概率”)。在分类中,后者可能更有用,因为它提供了有关预测的置信度信息:

lrn_rpart = lrn("classif.rpart", predict_type = "prob")

lrn_rpart$train(tsk_penguins, splits$train)

prediction = lrn_rpart$predict(tsk_penguins, splits$test)

prediction

#> <PredictionClassif> for 113 observations:

#> row_ids truth response prob.Adelie prob.Chinstrap prob.Gentoo

#> 2 Adelie Adelie 0.97029703 0.02970297 0.00000000

#> 4 Adelie Adelie 0.97029703 0.02970297 0.00000000

#> 7 Adelie Adelie 0.97029703 0.02970297 0.00000000

#> ---

#> 338 Chinstrap Chinstrap 0.04651163 0.93023256 0.02325581

#> 341 Chinstrap Adelie 0.97029703 0.02970297 0.00000000

#> 344 Chinstrap Chinstrap 0.04651163 0.93023256 0.02325581Also, the interface for classification measures, which are of class MeasureClassif, is identical to regression measures. The key difference in usage is that you will need to ensure your selected measure evaluates the prediction type of interest. To evaluate “response” predictions, you will need measures with predict_type = "response", or to evaluate probability predictions you will need predict_type = "prob". The easiest way to find these measures is by filtering the mlr_measures dictionary:

此外,分类度量标准的接口,其类别为

MeasureClassif,与回归度量标准完全相同。在使用上的主要区别在于,您需要确保所选的度量标准评估感兴趣的预测类型。要评估“response”预测,您需要使用predict_type = "response"的度量标准,或者要评估概率预测,您需要使用predict_type = "prob"的度量标准。查找这些度量标准的最简单方法是通过筛选mlr_measures字典:

as.data.table(msr())[

task_type == "classif" & predict_type == "prob" &

!sapply(task_properties, \(x) "twoclass" %in% x)

]

#> Key: <key>

#> key label task_type

#> <char> <char> <char>

#> 1: classif.logloss Log Loss classif

#> 2: classif.mauc_au1p Weighted average 1 vs. 1 multiclass AUC classif

#> 3: classif.mauc_au1u Average 1 vs. 1 multiclass AUC classif

#> 4: classif.mauc_aunp Weighted average 1 vs. rest multiclass AUC classif

#> 5: classif.mauc_aunu Average 1 vs. rest multiclass AUC classif

#> 6: classif.mbrier Multiclass Brier Score classif

#> packages predict_type task_properties

#> <list> <char> <list>

#> 1: mlr3,mlr3measures prob

#> 2: mlr3,mlr3measures prob

#> 3: mlr3,mlr3measures prob

#> 4: mlr3,mlr3measures prob

#> 5: mlr3,mlr3measures prob

#> 6: mlr3,mlr3measures prob2.5.4 PredictionClassif, Confusion Matrix, and Thresholding

PredictionClassif objects have two important differences from their regression analog. Firstly, the added field $confusion, and secondly the added method $set_threshold().

PredictionClassif对象与其回归模型的预测对象有两个重要的区别。首先是新增的字段$confusion,其次是新增的方法$set_threshold()。

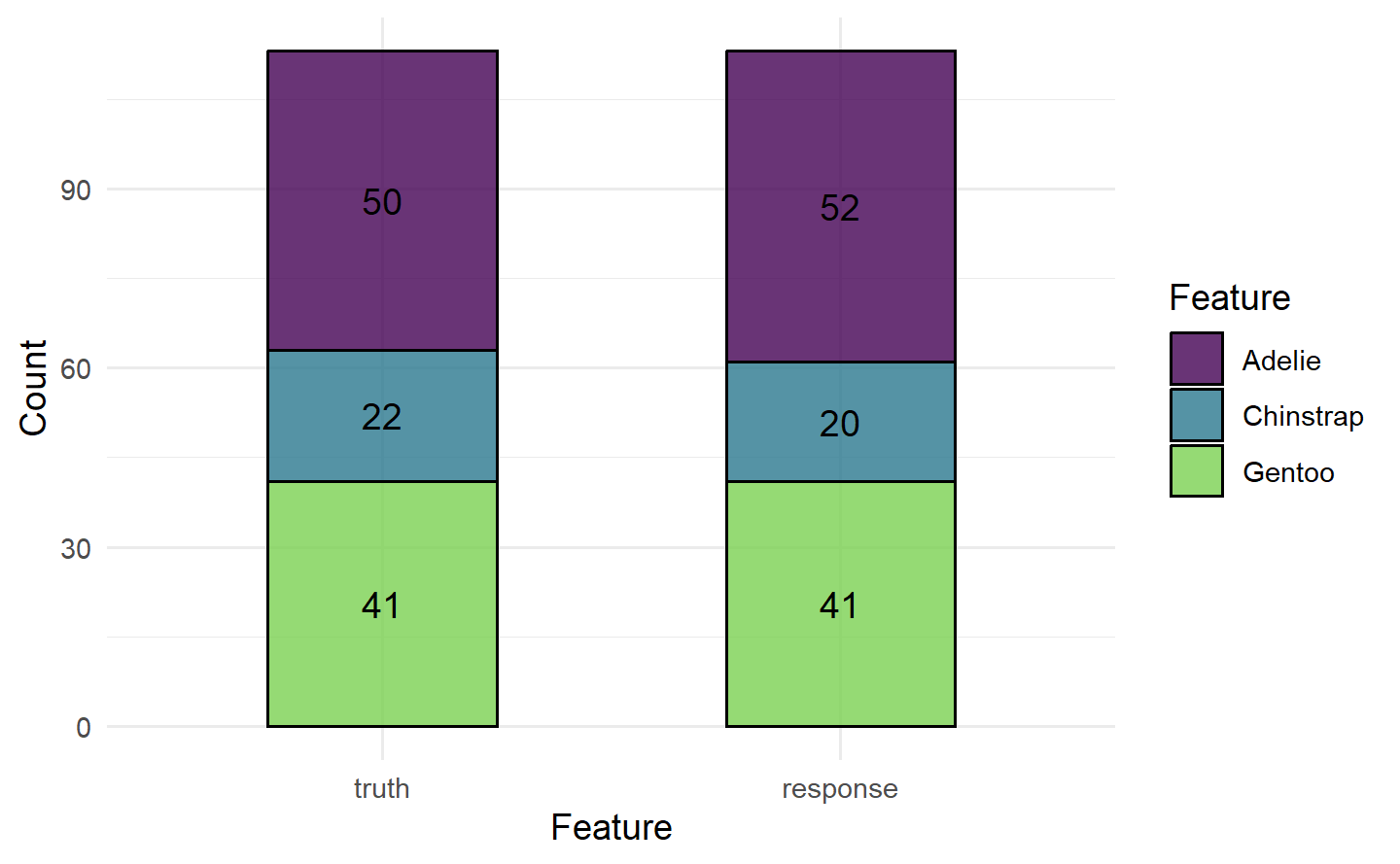

2.5.4.1 Confusion Matrix

prediction$confusion

#> truth

#> response Adelie Chinstrap Gentoo

#> Adelie 49 3 0

#> Chinstrap 1 18 1

#> Gentoo 0 1 40The rows in a confusion matrix are the predicted class and the columns are the true class. All off-diagonal entries are incorrectly classified observations, and all diagonal entries are correctly classified. In this case, the classifier does fairly well classifying all penguins, but we could have found that it only classifies the Adelie species well but often conflates Chinstrap and Gentoo, for example.

混淆矩阵中的行表示预测的类别,列表示真实的类别。所有非对角线条目都是被错误分类的观测值,而所有对角线条目都是被正确分类的。在这种情况下,分类器在对所有企鹅进行分类时表现得相当不错,但我们也可能发现它只能很好地对 Adelie 物种进行分类,但经常将 Chinstrap 和 Gentoo 混为一谈。

autoplot(prediction)

In the binary classification case, the top left entry corresponds to true positives, the top right to false positives, the bottom left to false negatives and the bottom right to true negatives. Taking tsk_sonar as an example with M as the positive class:

在二分类情况下,左上角的条目对应于真正例(true positives),右上角对应于假正例(false positives),左下角对应于假负例(false negatives),右下角对应于真负例(true negatives)。以

tsk_sonar为例,M为正类:

splits = partition(tsk_sonar)

lrn_rpart$

train(tsk_sonar, splits$train)$

predict(tsk_sonar, splits$test)$

confusion

#> truth

#> response M R

#> M 27 10

#> R 10 222.5.4.2 Thresholding

阈值化

This 50% value is known as the threshold and it can be useful to change this threshold if there is class imbalance (when one class is over- or under-represented in a dataset), or if there are different costs associated with classes, or simply if there is a preference to ‘over’-predict one class. As an example, let us take tsk("german_credit") in which 700 customers have good credit and 300 have bad. Now we could easily build a model with around “70%” accuracy simply by always predicting a customer will have good credit:

这个 50% 的值被称为阈值,如果数据集中存在类别不平衡(即一个类别在数据集中过多或过少出现),或者不同的类别具有不同的成本,或者只是有一种“过度”预测一种类别的倾向,那么更改这个阈值可能会很有用。举个例子,让我们看看

tsk("german_credit"),其中有 700 个客户信用良好,300 个客户信用不良。现在,我们可以很容易地构建一个模型,总是预测客户会有良好的信用,从而获得 “70%” 左右的准确性:

task_credit = tsk("german_credit")

lrn_featureless = lrn("classif.featureless", predict_type = "prob")

splits = partition(task_credit)

lrn_featureless$train(task_credit, splits$train)

prediction = lrn_featureless$predict(task_credit, splits$test)

prediction$score(msr("classif.acc"))

#> classif.acc

#> 0.7TODO:等待后续添加交叉引用 13.1

While this model may appear to have good performance on the surface, in fact, it just ignores all ‘bad’ customers – this can create big problems in this finance example, as well as in healthcare tasks and other settings where false positives cost more than false negatives (see Section 13.1 for cost-sensitive classification).

Thresholding allows classes to be selected with a different probability threshold, so instead of predicting that a customer has bad credit if P(good) < 50%, we might predict bad credit if P(good) < 70% – notice how we write this in terms of the positive class, which in this task is ‘good’. Let us see this in practice:

虽然这个模型表面上看起来性能不错,但实际上它只是忽略了所有“不良”的客户 - 这在金融示例以及在医疗任务和其他一些情况下可能会带来很大问题,特别是在假阳性的成本高于假阴性的情况下(请参见第13.1节的成本敏感分类)。

阈值化允许使用不同的概率阈值选择类别,因此,与其在P(好) < 50%时预测客户信用不良,我们可以在P(好) < 70%时预测客户信用不良。请注意,我们是根据正类别来表示这一点,而在这个任务中正类别是“好”。让我们看看实际应用中的情况:

prediction$set_threshold(0.7)

prediction$score(msr("classif.acc"))

#> classif.acc

#> 0.5393939prediction$set_threshold(0.7)

prediction$score(msr("classif.acc"))

#> classif.acc

#> 0.6878788

prediction$confusion

#> truth

#> response good bad

#> good 181 53

#> bad 50 463 Evaluation and Benchmarking

Resampling Does Not Avoid Model Overfitting: A common misunderstanding is that holdout and other more advanced resampling strategies can prevent model overfitting. In fact, these methods just make overfitting visible as we can separately evaluate train/test performance. Resampling strategies also allow us to make (nearly) unbiased estimations of the generalization error.

重采样不能避免模型过拟合:一个常见的误解是,留出策略和其他更高级的重采样策略可以防止模型过拟合。实际上,这些方法只是使过拟合问题更加显而易见,因为我们可以单独评估训练/测试性能。重采样策略还允许我们对泛化误差进行(几乎)无偏估计。

3.1 Holdout and Scoring

In practice, one would usually create an intermediate model, which is trained on a subset of the available data and then tested on the remainder of the data. The performance of this intermediate model, obtained by comparing the model predictions to the ground truth, is an estimate of the generalization performance of the final model, which is the model fitted on all data.

在实践中,通常会创建一个中间模型,该模型在可用数据的子集上进行训练,然后在剩余的数据上进行测试。通过将模型的预测与真实情况进行比较,中间模型的性能可以作为最终模型的泛化性能的估计。最终模型是在所有可用数据上训练的模型。

3.2 Resampling

3.2.1 Constructing a Resampling Strategy

as.data.table(rsmp())

#> Key: <key>

#> key label params iters

#> <char> <char> <list> <int>

#> 1: bootstrap Bootstrap ratio,repeats 30

#> 2: custom Custom Splits NA

#> 3: custom_cv Custom Split Cross-Validation NA

#> 4: cv Cross-Validation folds 10

#> 5: holdout Holdout ratio 1

#> 6: insample Insample Resampling 1

#> 7: loo Leave-One-Out NA

#> 8: repeated_cv Repeated Cross-Validation folds,repeats 100

#> 9: subsampling Subsampling ratio,repeats 30rsmp("holdout", ratio = .8)

#> <ResamplingHoldout>: Holdout

#> * Iterations: 1

#> * Instantiated: FALSE

#> * Parameters: ratio=0.8When a "Resampling" object is constructed, it is simply a definition for how the data splitting process will be performed on the task when running the resampling strategy. However, it is possible to manually instantiate a resampling strategy, i.e., generate all train-test splits, by calling the $instantiate() method on a given task.

当构建一个

"Resampling"对象时,它只是对在运行重采样策略时如何执行数据拆分过程的定义。然而,可以通过在给定任务上调用$instantiate()方法来手动实例化一个重采样策略,即生成所有的训练-测试拆分。

cv3$instantiate(tsk_penguins)

# first 5 observations in first traininng set

cv3$train_set(1)[1:5]

#> [1] 2 4 5 6 8

# fitst 5 observations in thirt test set

cv3$test_set(3)[1:5]

#> [1] 1 9 12 17 20When the aim is to fairly compare multiple learners, best practice dictates that all learners being compared use the same training data to build a model and that they use the same test data to evaluate the model performance. Resampling strategies are instantiated automatically for you when using the resample() method. Therefore, manually instantiating resampling strategies is rarely required but might be useful for debugging or digging deeper into a model’s performance.

当目标是公平比较多个学习器时,最佳实践要求所有进行比较的学习器都使用相同的训练数据来构建模型,并且它们使用相同的测试数据来评估模型性能。在使用

resample()方法时,重采样策略会自动为您实例化。因此,手动实例化重采样策略很少是必需的,但在调试或深入研究模型性能时可能会有用。

3.2.2 Resampling Experiments

The resample() function takes a given Task, Learner, and Resampling object to run the given resampling strategy. resample() repeatedly fits a model on training sets, makes predictions on the corresponding test sets and stores them in a ResampleResult object, which contains all the information needed to estimate the generalization performance.

resample() 函数接受给定的任务(Task)、学习器(Learner)和重采样(Resampling)对象,以运行给定的重采样策略。resample() 函数会在训练集上反复拟合模型,在相应的测试集上进行预测,并将预测结果存储在 ResampleResult 对象中,该对象包含了估算泛化性能所需的所有信息。

rr = resample(tsk_penguins, lrn_rpart, cv3)rr

#> <ResampleResult> with 3 resampling iterations

#> task_id learner_id resampling_id iteration warnings errors

#> penguins classif.rpart cv 1 0 0

#> penguins classif.rpart cv 2 0 0

#> penguins classif.rpart cv 3 0 0# calculate the score for each iteration

acc = rr$score(msr("classif.ce"))

acc[, .(iteration, classif.ce)]

#> iteration classif.ce

#> <int> <num>

#> 1: 1 0.04347826

#> 2: 2 0.09565217

#> 3: 3 0.06140351# aggregated score across all resampling iterations

rr$aggregate(msr("classif.ce"))

#> classif.ce

#> 0.06684465By default, the majority of measures will aggregate scores using a macro average, which first calculates the measure in each resampling iteration separately, and then averages these scores across all iterations. However, it is also possible to aggregate scores using a micro average, which pools predictions across resampling iterations into one Prediction object and then computes the measure on this directly:

默认情况下,大多数性能度量会使用宏平均(macro average)来汇总分数,它首先在每个重采样迭代中分别计算度量,然后在所有迭代中对这些分数进行平均。但也可以使用微平均(micro average)来汇总分数,它将重采样迭代中的预测汇总到一个

Prediction对象中,然后直接在该对象上计算度量:

rr$aggregate(msr("classif.ce", average = "micro"))

#> classif.ce

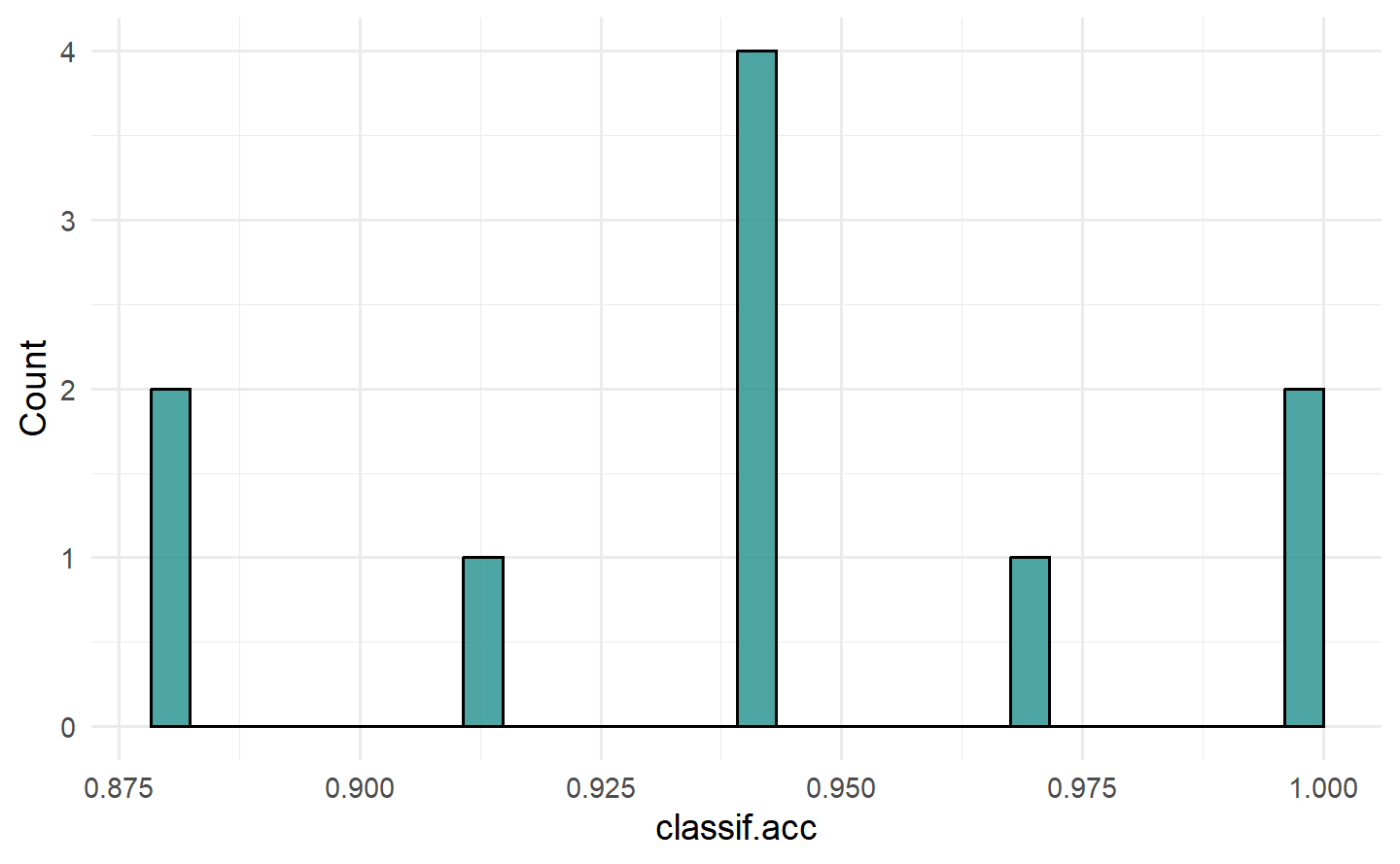

#> 0.06686047To visualize the resampling results, you can use the autoplot.ResampleResult() function to plot scores across folds as boxplots or histograms (Figure 3.1). Histograms can be useful to visually gauge the variance of the performance results across resampling iterations, whereas boxplots are often used when multiple learners are compared side-by-side (see Section 3.3).

要可视化重采样结果,您可以使用

autoplot.ResampleResult()函数绘制跨折叠的分数箱线图或直方图(Figure 3.1)。直方图可以用于直观评估跨重采样迭代的性能结果方差,而箱线图通常用于比较多个学习器并排放置在一起时(请参阅 Section 3.3)。

autoplot(rr, measure = msr("classif.acc"), type = "boxplot")

autoplot(rr, measure = msr("classif.acc"), type = "histogram")

3.2.3 ResampleResult Objects

# list of prediction objects

rrp = rr$predictions()

# print first two

rrp[1:2]

#> [[1]]

#> <PredictionClassif> for 35 observations:

#> row_ids truth response

#> 7 Adelie Adelie

#> 20 Adelie Chinstrap

#> 32 Adelie Adelie

#> ---

#> 326 Chinstrap Chinstrap

#> 330 Chinstrap Chinstrap

#> 337 Chinstrap Chinstrap

#>

#> [[2]]

#> <PredictionClassif> for 35 observations:

#> row_ids truth response

#> 1 Adelie Adelie

#> 5 Adelie Adelie

#> 9 Adelie Adelie

#> ---

#> 334 Chinstrap Chinstrap

#> 339 Chinstrap Chinstrap

#> 340 Chinstrap ChinstrapBy default, the intermediate models produced at each resampling iteration are discarded after the prediction step to reduce memory consumption of the ResampleResult object (only the predictions are required to calculate most performance measures). However, it can sometimes be useful to inspect, compare, or extract information from these intermediate models. We can configure the resample() function to keep the fitted intermediate models by setting store_models = TRUE. Each model trained in a specific resampling iteration can then be accessed via $learners[[i]]$model, where i refers to the i-th resampling iteration:

默认情况下,在进行预测步骤后,每个重新采样迭代产生的中间模型都会被丢弃,以降低

ResampleResult对象的内存消耗(大多数性能指标仅需要预测)。然而,有时候检查、比较或从这些中间模型中提取信息可能是有用的。我们可以通过设置store_models = TRUE来配置resample()函数以保留拟合的中间模型。然后,可以通过$learners[[i]]$model来访问在特定重新采样迭代中训练的每个模型,其中i指的是第i个重新采样迭代:

rr = resample(tsk_penguins, lrn_rpart, cv3, store_models = TRUE)# get the model from the first iteration

rr$learners[[1]]$model

#> n= 229

#>

#> node), split, n, loss, yval, (yprob)

#> * denotes terminal node

#>

#> 1) root 229 130 Adelie (0.432314410 0.205240175 0.362445415)

#> 2) flipper_length< 206.5 142 45 Adelie (0.683098592 0.309859155 0.007042254)

#> 4) bill_length< 44.65 97 3 Adelie (0.969072165 0.030927835 0.000000000) *

#> 5) bill_length>=44.65 45 4 Chinstrap (0.066666667 0.911111111 0.022222222) *

#> 3) flipper_length>=206.5 87 5 Gentoo (0.022988506 0.034482759 0.942528736) *In this example, we could then inspect the most important variables in each iteration to help us learn more about the respective fitted models:

# print 2nd and 3rd iteration

lapply(rr$learners[2:3], \(x) x$model$variable.importance)

#> [[1]]

#> flipper_length bill_length bill_depth body_mass island

#> 88.52870 88.07438 71.51814 67.04826 55.13690

#>

#> [[2]]

#> bill_length flipper_length bill_depth body_mass island

#> 82.18794 75.92820 66.94285 57.14539 50.290493.3 Benchmarking

3.3.1 benchmark()

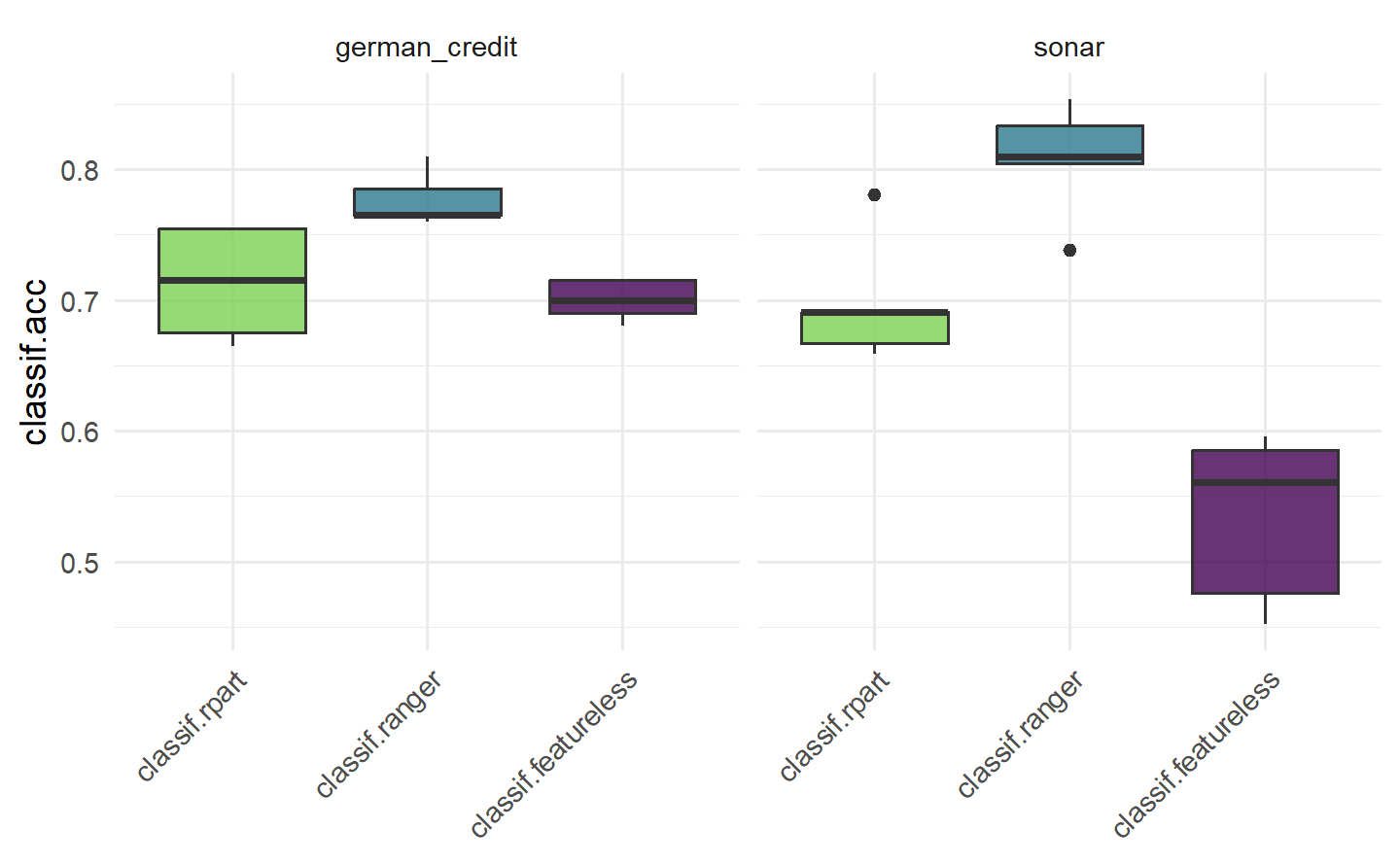

Benchmark experiments in mlr3 are conducted with benchmark(), which simply runs resample() on each task and learner separately, then collects the results. The provided resampling strategy is automatically instantiated on each task to ensure that all learners are compared against the same training and test data.

To use the benchmark() function we first call benchmark_grid(), which constructs an exhaustive design to describe all combinations of the learners, tasks and resamplings to be used in a benchmark experiment, and instantiates the resampling strategies.

mlr3中的基准实验是使用benchmark()函数进行的,该函数简单地在每个任务和学习器上分别运行resample(),然后收集结果。提供的重新采样策略会自动在每个任务上进行实例化,以确保所有学习器都与相同的训练和测试数据进行比较。要使用

benchmark()函数,我们首先调用benchmark_grid()函数,该函数构建一个详尽的设计来描述在基准实验中要使用的所有学习器、任务和重新采样的组合,并实例化重新采样策略。

tasks = tsks(c("german_credit", "sonar"))

learners = lrns(c("classif.rpart", "classif.ranger", "classif.featureless"),

predict_type = "prob")

rsmp_cv5 = rsmp("cv", folds = 5)

design = benchmark_grid(tasks, learners, rsmp_cv5)

design

#> task learner resampling

#> <char> <char> <char>

#> 1: german_credit classif.rpart cv

#> 2: german_credit classif.ranger cv

#> 3: german_credit classif.featureless cv

#> 4: sonar classif.rpart cv

#> 5: sonar classif.ranger cv

#> 6: sonar classif.featureless cvBy default, benchmark_grid() instantiates the resamplings on the tasks, which means that concrete train-test splits are generated. Since this process is stochastic, it is necessary to set a seed before calling benchmark_grid() to ensure reproducibility of the data splits.

在默认情况下,

benchmark_grid()会在任务上实例化重新采样,这意味着会生成具体的训练-测试拆分。由于这个过程是随机的,所以在调用benchmark_grid()之前需要设置一个种子,以确保数据拆分的可重现性。

# pass design to benchmark()

bmr = benchmark(design)bmr

#> <BenchmarkResult> of 30 rows with 6 resampling runs

#> nr task_id learner_id resampling_id iters warnings errors

#> 1 german_credit classif.rpart cv 5 0 0

#> 2 german_credit classif.ranger cv 5 0 0

#> 3 german_credit classif.featureless cv 5 0 0

#> 4 sonar classif.rpart cv 5 0 0

#> 5 sonar classif.ranger cv 5 0 0

#> 6 sonar classif.featureless cv 5 0 0As benchmark() is just an extension of resample(), we can once again use $score(), or $aggregate() depending on your use-case, though note that in this case $score() will return results over each fold of each learner/task/resampling combination.

由于

benchmark()只是resample()的扩展,因此我们可以再次使用$score()或$aggregate(),具体取决于您的用例,但请注意,在这种情况下,$score()将返回每个学习器/任务/重新采样组合的每个折叠的结果。

bmr$score()[c(1, 7, 13), .(iteration, task_id, learner_id, classif.ce)]

#> iteration task_id learner_id classif.ce

#> <int> <char> <char> <num>

#> 1: 1 german_credit classif.rpart 0.335

#> 2: 2 german_credit classif.ranger 0.240

#> 3: 3 german_credit classif.featureless 0.300bmr$aggregate()[, .(task_id, learner_id, classif.ce)]

#> task_id learner_id classif.ce

#> <char> <char> <num>

#> 1: german_credit classif.rpart 0.2870000

#> 2: german_credit classif.ranger 0.2230000

#> 3: german_credit classif.featureless 0.3000000

#> 4: sonar classif.rpart 0.3026713

#> 5: sonar classif.ranger 0.1921022

#> 6: sonar classif.featureless 0.4659698TODO:等待后续添加交叉引用 11.3

This would conclude a basic benchmark experiment where you can draw tentative conclusions about model performance, in this case we would possibly conclude that the random forest is the best of all three models on each task. We draw conclusions cautiously here as we have not run any statistical tests or included standard errors of measures, so we cannot definitively say if one model outperforms the other.

As the results of $score() and $aggregate() are returned in a data.table, you can post-process and analyze the results in any way you want. A common mistake is to average the learner performance across all tasks when the tasks vary significantly. This is a mistake as averaging the performance will miss out important insights into how learners compare on ‘easier’ or more ‘difficult’ predictive problems. A more robust alternative to compare the overall algorithm performance across multiple tasks is to compute the ranks of each learner on each task separately and then calculate the average ranks. This can provide a better comparison as task-specific ‘quirks’ are taken into account by comparing learners within tasks before comparing them across tasks. However, using ranks will lose information about the numerical differences between the calculated performance scores. Analysis of benchmark experiments, including statistical tests, is covered in more detail in Section 11.3.

这将总结了一个基本的基准实验,您可以初步得出关于模型性能的结论,在这种情况下,我们可能会得出结论,随机森林在每个任务上都是三个模型中最好的。我们在这里谨慎地得出结论,因为我们没有进行任何统计测试,也没有包括性能度量的标准错误,因此我们不能明确地说一个模型是否优于另一个。

由于

$score()和$aggregate()的结果以data.table返回,您可以以任何您想要的方式进行后处理和分析结果。一个常见的错误是在任务差异明显的情况下,对所有任务的学习器性能进行平均。这是一个错误,因为对性能进行平均将错过对学习器在“更容易”或“更困难”的预测问题上的比较重要的洞察。比较多个任务上的整体算法性能的更强大的替代方法是分别计算每个任务上每个学习器的排名,然后计算平均排名。这可以提供更好的比较,因为通过在比较任务之前在任务内部比较学习器,可以考虑到特定于任务的“怪癖”。然而,使用排名会丢失关于计算的性能分数之间的数值差异的信息。关于基准实验的分析,包括统计测试,在第11.3节中将更详细地介绍。

3.3.2 BenchmarkResult Objects

A BenchmarkResult object is a collection of multiple ResampleResult objects.

bmrdt = as.data.table(bmr)

bmrdt[1:2, .(task, learner, resampling, iteration)]

#> task learner

#> <list> <list>

#> 1: <TaskClassif:german_credit> <LearnerClassifRpart:classif.rpart>

#> 2: <TaskClassif:german_credit> <LearnerClassifRpart:classif.rpart>

#> resampling iteration

#> <list> <int>

#> 1: <ResamplingCV> 1

#> 2: <ResamplingCV> 2rr1 = bmr$resample_result(1)

rr2 = bmr$resample_result(2)

rr1

#> <ResampleResult> with 5 resampling iterations

#> task_id learner_id resampling_id iteration warnings errors

#> german_credit classif.rpart cv 1 0 0

#> german_credit classif.rpart cv 2 0 0

#> german_credit classif.rpart cv 3 0 0

#> german_credit classif.rpart cv 4 0 0

#> german_credit classif.rpart cv 5 0 0In addition, as_benchmark_result() can be used to convert objects from ResampleResult to BenchmarkResult. The c()-method can be used to combine multiple BenchmarkResult objects, which can be useful when conducting experiments across multiple machines:

此外,可以使用

as_benchmark_result()将ResampleResult对象转换为BenchmarkResult。c()方法可用于组合多个BenchmarkResult对象,这在跨多台计算机进行实验时非常有用:

bmr1 = as_benchmark_result(rr1)

bmr2 = as_benchmark_result(rr2)

c(bmr1, bmr2)

#> <BenchmarkResult> of 10 rows with 2 resampling runs

#> nr task_id learner_id resampling_id iters warnings errors

#> 1 german_credit classif.rpart cv 5 0 0

#> 2 german_credit classif.ranger cv 5 0 0Boxplots are most commonly used to visualize benchmark experiments as they can intuitively summarize results across tasks and learners simultaneously.

箱线图最常用于可视化基准实验,因为它们可以直观地同时总结任务和学习器之间的结果。

3.4 Evaluation of Binary Classifiers

3.4.1 Confusion Matrix

It is possible for a classifier to have a good classification accuracy but to overlook the nuances provided by a full confusion matrix, as in the following tsk("german_credit") example:

tsk_german = tsk("german_credit")

lrn_ranger = lrn("classif.ranger", predict_type = "prob")

splits = partition(tsk_german, ratio = .8)

lrn_ranger$train(tsk_german, splits$train)

prediction = lrn_ranger$predict(tsk_german, splits$test)

prediction$score(msr("classif.acc"))

#> classif.acc

#> 0.74

prediction$confusion

#> truth

#> response good bad

#> good 124 36

#> bad 16 24On their own, the absolute numbers in a confusion matrix can be less useful when there is class imbalance. Instead, several normalized measures can be derived (Figure 3.3):

True Positive Rate (TPR), Sensitivity or Recall: How many of the true positives did we predict as positive?

True Negative Rate (TNR) or Specificity: How many of the true negatives did we predict as negative?

False Positive Rate (FPR), or \(1 -\) Specificity: How many of the true negatives did we predict as positive?

Positive Predictive Value (PPV) or Precision: If we predict positive how likely is it a true positive?

Negative Predictive Value (NPV): If we predict negative how likely is it a true negative?

Accuracy (ACC): The proportion of correctly classified instances out of the total number of instances.

F1-score: The harmonic mean of precision and recall, which balances the trade-off between precision and recall. It is calculated as \(2 \times \frac{Precision \times Recall}{Precision + Recall}\).

The mlr3measures package allows you to compute several common confusion matrix-based measures using the confusion_matrix() function:

mlr3measures::confusion_matrix(

truth = prediction$truth,

response = prediction$response,

positive = tsk_german$positive

)

#> truth

#> response good bad

#> good 124 36

#> bad 16 24

#> acc : 0.7400; ce : 0.2600; dor : 5.1667; f1 : 0.8267

#> fdr : 0.2250; fnr : 0.1143; fomr: 0.4000; fpr : 0.6000

#> mcc : 0.3273; npv : 0.6000; ppv : 0.7750; tnr : 0.4000

#> tpr : 0.88573.4.2 ROC Analysis

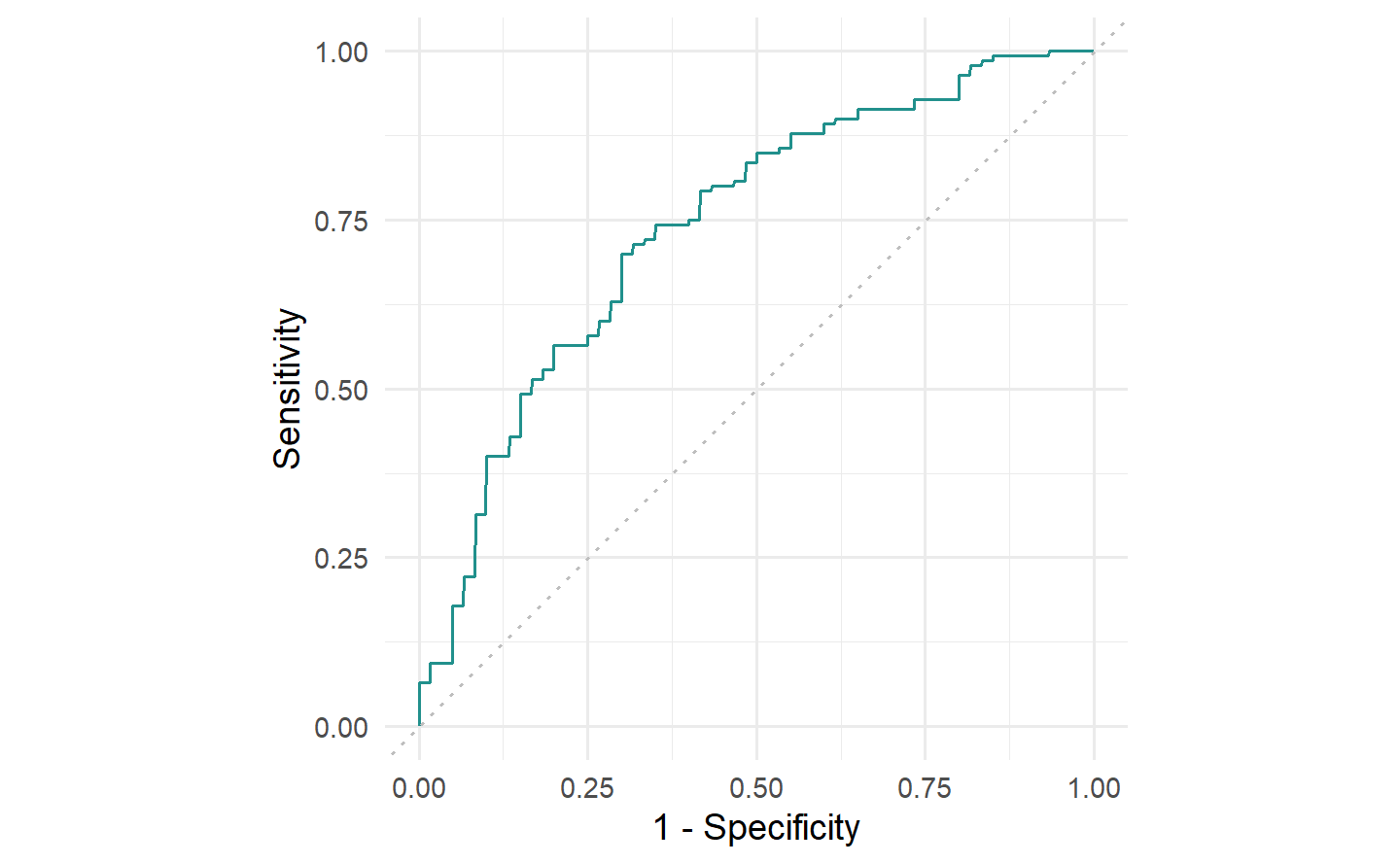

The ROC curve is a line graph with TPR on the y-axis and the FPR on the x-axis.

Consider classifiers that predict probabilities instead of discrete classes. Using different thresholds to cut off predicted probabilities and assign them to the positive and negative class will lead to different TPRs and FPRs and by plotting these values across different thresholds we can characterize the behavior of a binary classifier – this is the ROC curve.

考虑预测概率而不是离散类别的分类器。使用不同的阈值来截断预测的概率并将其分配到正类别和负类别将导致不同的 TPR 和 FPR,并通过在不同的阈值上绘制这些值,我们可以表征二元分类器的行为 - 这就是 ROC 曲线。

autoplot(prediction, type = "roc")

german_credit dataset and the classif.ranger random forest learner. Recall FPR = \(1 -\) Specificity and TPR = Sensitivity.

A natural performance measure that can be derived from the ROC curve is the area under the curve (AUC), implemented in msr("classif.auc"). The AUC can be interpreted as the probability that a randomly chosen positive instance has a higher predicted probability of belonging to the positive class than a randomly chosen negative instance. Therefore, higher values (closer to ) indicate better performance. Random classifiers (such as the featureless baseline) will always have an AUC of (approximately, when evaluated empirically) 0.5.

从 ROC 曲线中可以导出的一个自然性能度量是曲线下面积(AUC),在

msr("classif.auc")中实现。AUC 可以解释为随机选择的正实例具有较高的预测概率,属于正类别,而不是随机选择的负实例的概率。因此,较高的值(越接近 1)表示更好的性能。随机分类器(例如没有特征的基线)的AUC总是为(在经验上评估时约为 0.5)。

prediction$score(msr("classif.auc"))

#> classif.auc

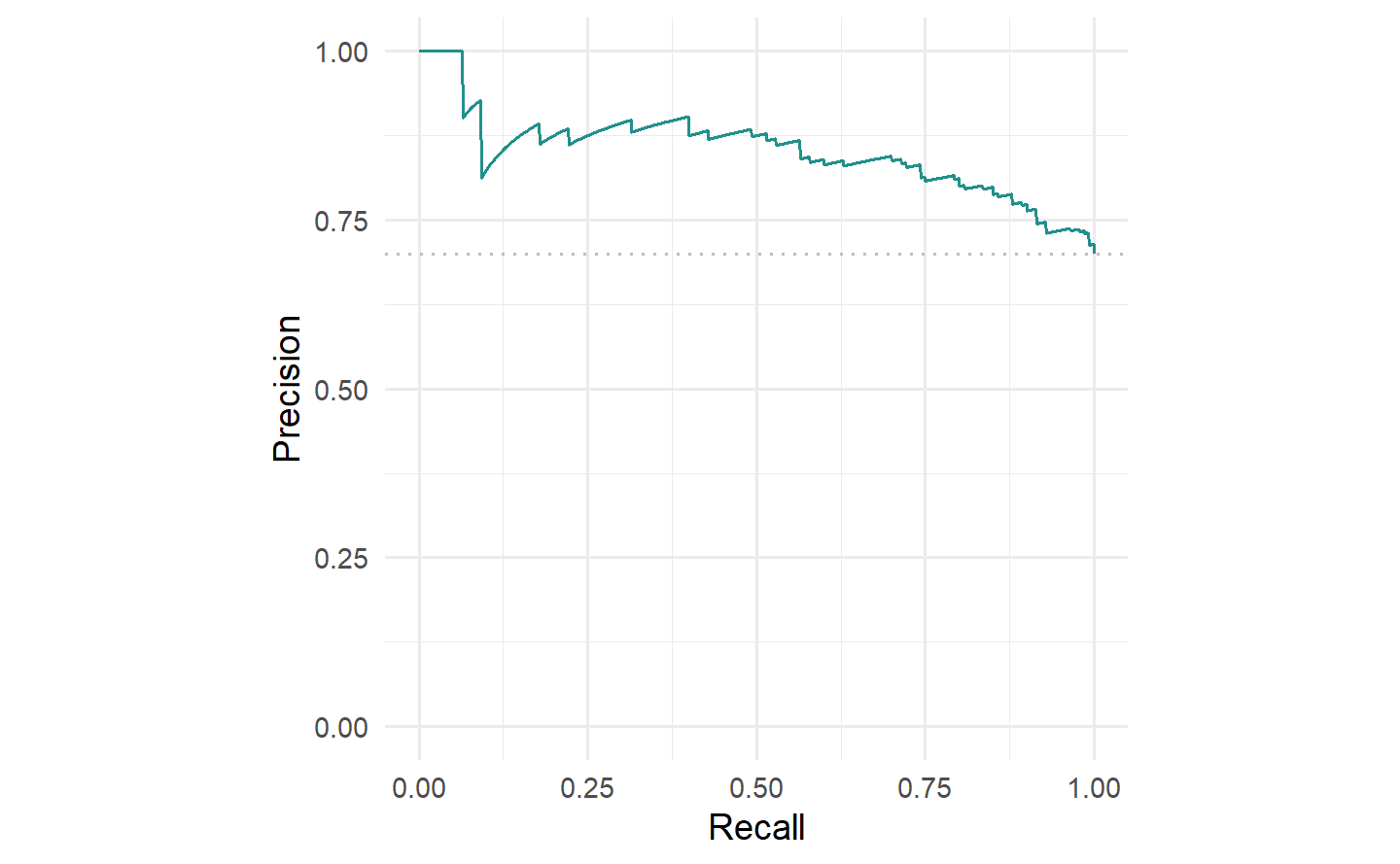

#> 0.7407143We can also plot the precision-recall curve (PRC) which visualizes the PPV/precision vs. TPR/recall. The main difference between ROC curves and PR curves is that the number of true-negatives are ignored in the latter. This can be useful in imbalanced populations where the positive class is rare, and where a classifier with high TPR may still not be very informative and have low PPV. See Davis and Goadrich (2006) for a detailed discussion about the relationship between the PRC and ROC curves.

我们还可以绘制精确度-召回曲线(PRC),该曲线可视化了 PPV/精确度 与 TPR/召回 之间的关系。ROC曲线和PR曲线之间的主要区别在于后者忽略了真负例的数量。在不平衡的人群中,正类别很少见的情况下,具有高TPR的分类器可能仍然不太具有信息性,并且具有较低的PPV。有关PRC和ROC曲线之间关系的详细讨论,请参阅 Davis 和 Goadrich(2006)。

autoplot(prediction, type = "prc")

tsk("german_credit") and lrn("classif.ranger").

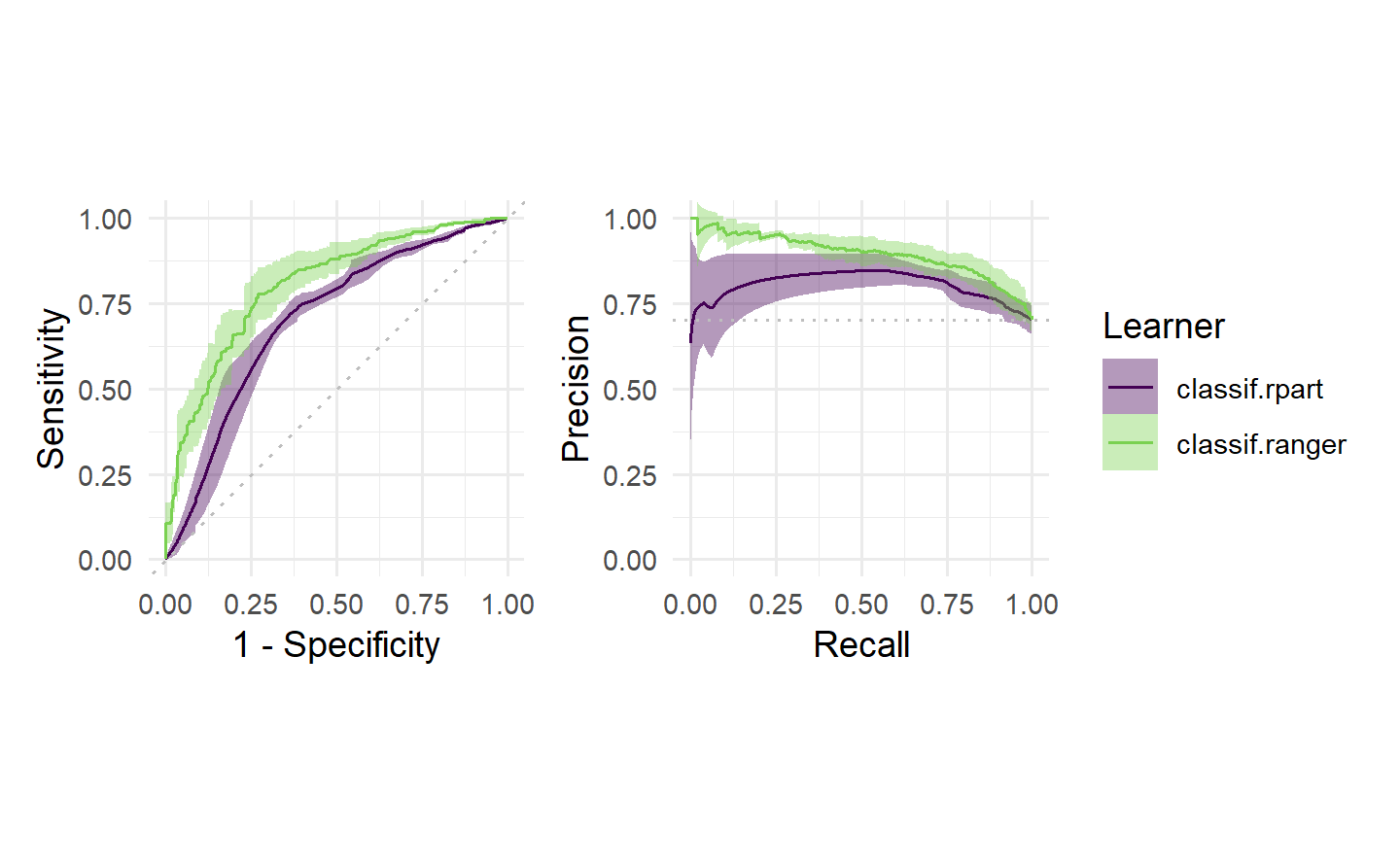

Finally, we can visualize ROC/PR curves for a BenchmarkResult to compare multiple learners on the same Task:

autoplot(bmr, type = "roc") +

autoplot(bmr, type = "prc") +

plot_layout(guides = "collect")

Tuning and Feature Selection

4 Hyperparameter Optimization

Hyperparameter optimization (HPO) closely relates to model evaluation (Chapter 3) as the objective is to find a hyperparameter configuration that optimizes the generalization performance. Broadly speaking, we could think of finding the optimal model configuration in the same way as selecting a model from a benchmark experiment, where in this case each model in the experiment is the same algorithm but with different hyperparameter configurations. For example, we could benchmark three support vector machines (SVMs) with three different cost values.

HPO与模型评估(Chapter 3)密切相关,因为目标是找到一个优化泛化性能的超参数配置。从广义上讲,我们可以将找到最佳模型配置视为从基准实验中选择模型的方式,其中在这种情况下,实验中的每个模型都是相同的算法,但具有不同的超参数配置。例如,我们可以使用三个不同

cost值来进行支持向量机(SVM)的基准测试。

4.1 Model Tuning

mlr3tuning is the hyperparameter optimization package of the mlr3 ecosystem. At the heart of the package are the R6 classes

TuningInstanceSingleCrit, a tuning ‘instance’ that describes the optimization problem and store the results; andTunerwhich is used to configure and run optimization algorithms.

4.1.1 Learner and Search Space

as.data.table(lrn("classif.svm")$param_set)[,

.(id, class, lower, upper, nlevels)]

#> id class lower upper nlevels

#> <char> <char> <num> <num> <num>

#> 1: cachesize ParamDbl -Inf Inf Inf

#> 2: class.weights ParamUty NA NA Inf

#> 3: coef0 ParamDbl -Inf Inf Inf

#> 4: cost ParamDbl 0 Inf Inf

#> 5: cross ParamInt 0 Inf Inf

#> 6: decision.values ParamLgl NA NA 2

#> 7: degree ParamInt 1 Inf Inf

#> 8: epsilon ParamDbl 0 Inf Inf

#> 9: fitted ParamLgl NA NA 2

#> 10: gamma ParamDbl 0 Inf Inf

#> 11: kernel ParamFct NA NA 4

#> 12: nu ParamDbl -Inf Inf Inf

#> 13: scale ParamUty NA NA Inf

#> 14: shrinking ParamLgl NA NA 2

#> 15: tolerance ParamDbl 0 Inf Inf

#> 16: type ParamFct NA NA 2learner = lrn("classif.svm",

type = "C-classification",

kernel = "radial",

cost = to_tune(1e-1, 1e5),

gamma = to_tune(1e-1, 1))

learner

#> <LearnerClassifSVM:classif.svm>: Support Vector Machine

#> * Model: -

#> * Parameters: type=C-classification, kernel=radial,

#> cost=<RangeTuneToken>, gamma=<RangeTuneToken>

#> * Packages: mlr3, mlr3learners, e1071

#> * Predict Types: [response], prob

#> * Feature Types: logical, integer, numeric

#> * Properties: multiclass, twoclass4.1.2 Terminator

mlr3tuning includes many methods to specify when to terminate an algorithm (Table 4.1), which are implemented in Terminator classes. Terminators are stored in the mlr_terminators dictionary and are constructed with the sugar function trm().

mlr3tuning at the time of publication, their function call and default parameters. A complete and up-to-date list can be found at https://mlr-org.com/terminators.html.

| Terminator | Function call and default parameters |

|---|---|

| Clock Time | trm("clock_time") |

| Combo | trm("combo", any = TRUE) |

| None | trm("none") |

| Number of Evaluations | trm("evals", n_evals = 100, k = 0) |

| Performance Level | trm("perf_reached", level = 0.1) |

| Run Time | trm("run_time", secs = 30) |

| Stagnation | trm("stagnation", iters = 10, threshold = 0) |

The most commonly used terminators are those that stop the tuning after a certain time (trm("run_time")) or a given number of evaluations (trm("evals")). Choosing a runtime is often based on practical considerations and intuition. Using a time limit can be important on compute clusters where a maximum runtime for a compute job may need to be specified. trm("perf_reached") stops the tuning when a specified performance level is reached, which can be helpful if a certain performance is seen as sufficient for the practical use of the model, however, if this is set too optimistically the tuning may never terminate. trm("stagnation") stops when no progress greater than the threshold has been made for a set number of iterations. The threshold can be difficult to select as the optimization could stop too soon for complex search spaces despite room for (possibly significant) improvement. trm("none") is used for tuners that control termination themselves and so this terminator does nothing. Finally, any of these terminators can be freely combined by using trm("combo"), which can be used to specify if HPO finishes when any (any = TRUE) terminator is triggered or when all (any = FALSE) are triggered.

最常用的终止条件通常是那些在一定时间(

trm("run_time"))或给定的评估次数(trm("evals"))之后停止调优的条件。选择运行时间通常基于实际考虑和直觉。在计算集群上使用时间限制可能很重要,因为可能需要为计算作业指定最大运行时间。trm("perf_reached")在达到指定性能水平时停止调优,这可以在某种性能被视为足够实际使用的情况下很有帮助,但如果设置得过于乐观,调优可能永远不会结束。trm("stagnation")在一定迭代次数内没有超过阈值的进展时停止,阈值的选择可能很困难,因为尽管可能有改进的空间(可能很大),但对于复杂的搜索空间,优化可能会过早停止。trm("none")用于控制自己终止的调谐器,因此该终止条件什么也不做。最后,任何这些终止条件都可以通过使用trm("combo")自由组合,可以用来指定HPO是否在任何(any = TRUE)终止条件触发时结束,或者在所有(any = FALSE)终止条件触发时结束。

4.1.3 Tuning Instance with ti

The tuning instance collects the tuner-agnostic information required to optimize a model, i.e., all information about the tuning process, except for the tuning algorithm itself. This includes the task to tune over, the learner to tune, the resampling method and measure used to analytically compare hyperparameter optimization configurations, and the terminator to determine when the measure has been optimized ‘enough’. This implicitly defines a “black box” objective function, mapping hyperparameter configurations to (stochastic) performance values, to be optimized. This concept will be revisited in Chapter 5.

调优实例收集了优化模型所需的与调谐器无关的信息,即所有与调优过程有关的信息,除了调谐算法本身。这包括要调优的任务、要调优的学习器、用于分析比较超参数优化配置的重抽样方法和度量,以及确定度量何时已经被优化到足够程度的终止条件。这隐式地定义了一个“黑盒”目标函数,将超参数配置映射到(随机的)性能值,以便进行优化。这个概念将在 Chapter 5 中重新讨论。

tsk_sonar = tsk("sonar")

instance = ti(

task = tsk_sonar,

learner = learner,

resampling = rsmp("cv", folds = 3),

measures = msr("classif.ce"),

terminator = trm("none")

)

instance

#> <TuningInstanceSingleCrit>

#> * State: Not optimized

#> * Objective: <ObjectiveTuning:classif.svm_on_sonar>

#> * Search Space:

#> id class lower upper nlevels

#> <char> <char> <num> <num> <num>

#> 1: cost ParamDbl 0.1 1e+05 Inf

#> 2: gamma ParamDbl 0.1 1e+00 Inf

#> * Terminator: <TerminatorNone>4.1.4 Tuner

With all the pieces of our tuning problem assembled, we can now decide how to tune our model. There are multiple Tuner classes in mlr3tuning, which implement different HPO (or more generally speaking black box optimization) algorithms (Table 4.2).

mlr3tuning, their function call and the package in which the algorithm is implemented. A complete and up-to-date list can be found at https://mlr-org.com/tuners.html.

| Tuner | Function call | Package |

|---|---|---|

| Random Search | tnr("random_search") |

mlr3tuning |

| Grid Search | tnr("grid_search") |

mlr3tuning |

| Bayesian Optimization | tnr("mbo") |

mlr3mbo |

| CMA-ES | tnr("cmaes") |

adagio |

| Iterated Racing | tnr("irace") |

irace |

| Hyperband | tnr("hyperband") |

mlr3hyperband |

| Generalized Simulated Annealing | tnr("gensa") |

GenSA |

| Nonlinear Optimization | tnr("nloptr") |

nloptr |

4.1.4.1 Search Strategies

Grid search and random search (Bergstra and Bengio 2012) are the most basic algorithms and are often selected first in initial experiments. The idea of grid search is to exhaustively evaluate every possible combination of given hyperparameter values. Categorical hyperparameters are usually evaluated over all possible values they can take. Numeric and integer hyperparameter values are then spaced equidistantly in their box constraints (upper and lower bounds) according to a given resolution, which is the number of distinct values to try per hyperparameter. Random search involves randomly selecting values for each hyperparameter independently from a pre-specified distribution, usually uniform. Both methods are non-adaptive, which means each proposed configuration ignores the performance of previous configurations. Due to their simplicity, both grid search and random search can handle mixed search spaces (i.e., hyperparameters can be numeric, integer, or categorical) as well as hierarchical search spaces (Section 4.4).

网格搜索和随机搜索(Bergstra和Bengio 2012)是最基本的算法,通常在初始实验中首选。网格搜索的思想是详尽地评估给定超参数值的每种可能组合。通常会对分类超参数评估它们可以取的所有可能值。然后,数值和整数超参数值将根据给定的分辨率均匀分布在它们的箱约束(上下界)中,分辨率是每个超参数要尝试的不同值的数量。随机搜索涉及从预先指定的分布(通常是均匀分布)中独立地随机选择每个超参数的值。这两种方法都是非自适应的,这意味着每个提出的配置都忽略了先前配置的性能。由于它们的简单性,网格搜索和随机搜索可以处理混合搜索空间(即,超参数可以是数值、整数或分类的)以及分层搜索空间(Section 4.4)。

4.1.4.2 Adaptive Algorithms

Adaptive algorithms learn from previously evaluated configurations to find good configurations quickly, examples in mlr3 include Bayesian optimization (also called model-based optimization), Covariance Matrix Adaptation Evolution Strategy (CMA-ES), Iterated Racing, and Hyperband.

Bayesian optimization (e.g., Snoek, Larochelle, and Adams 2012) describes a family of iterative optimization algorithms that use a surrogate model to approximate the unknown function that is to be optimized – in HPO this would be the mapping from a hyperparameter configuration to the estimated generalization performance. If a suitable surrogate model is chosen, e.g. a random forest, Bayesian optimization can be quite flexible and even handle mixed and hierarchical search spaces. Bayesian optimization is discussed in full detail in Section 5.4.

CMA-ES (Hansen and Auger 2011) is an evolutionary strategy that maintains a probability distribution over candidate points, with the distribution represented by a mean vector and covariance matrix. A new set of candidate points is generated by sampling from this distribution, with the probability of each candidate being proportional to its performance. The covariance matrix is adapted over time to reflect the performance landscape. Further evolutionary strategies are available in mlr3 via the miesmuschel package, however, these will not be covered in this book.

Racing algorithms work by iteratively discarding configurations that show poor performance, as determined by statistical tests. Iterated Racing (López-Ibáñez et al. 2016) starts by ‘racing’ down an initial population of randomly sampled configurations from a parameterized density and then uses the surviving configurations of the race to stochastically update the density of the subsequent race to focus on promising regions of the search space, and so on.

Multi-fidelity HPO is an adaptive method that leverages the predictive power of computationally cheap lower fidelity evaluations (i.e., poorer quality predictions such as those arising from neural networks with a small number of epochs) to improve the overall optimization efficiency. This concept is used in Hyperband (Li et al. 2018), a popular multi-fidelity hyperparameter optimization algorithm that dynamically allocates increasingly more resources to promising configurations and terminates low-performing ones. Hyperband is discussed in full detail in Section 5.3.

Other implemented algorithms for numeric search spaces are Generalized Simulated Annealing (Xiang et al. 2013; Tsallis and Stariolo 1996) and various nonlinear optimization algorithms.

自适应算法通过学习先前评估的配置来快速找到良好的配置,

mlr3中的示例包括贝叶斯优化(也称为基于模型的优化)、协方差矩阵自适应进化策略(CMA-ES)、迭代比赛和Hyperband。贝叶斯优化(例如,Snoek、Larochelle和Adams 2012)描述了一族迭代优化算法,这些算法使用替代模型来近似待优化的未知函数——在HPO中,这将是从超参数配置到估计的泛化性能的映射。如果选择了合适的替代模型,例如随机森林,贝叶斯优化可以非常灵活,甚至可以处理混合和分层搜索空间。贝叶斯优化将在 Section 5.4 中详细讨论。

CMA-ES(Hansen和Auger 2011)是一种进化策略,它维护了候选点的概率分布,分布由均值向量和协方差矩阵表示。通过从该分布中抽样生成一组新的候选点,每个候选点的选择概率与其性能成正比。协方差矩阵会随着时间的推移而适应反映性能景观。通过

mlr3中的miesmuschel包,还提供了其他进化策略,不过本书不会涵盖这些内容。比赛算法通过迭代地丢弃显示性能较差的配置,这是通过统计测试确定的。迭代比赛(López-Ibáñez等人2016)首先通过从参数化密度中随机抽样生成的一组初始配置进行“比赛”,然后使用比赛的生存配置来随机更新后续比赛的密度,以便集中在搜索空间的有前途的区域,依此类推。

多保真度HPO是一种自适应方法,利用计算成本低的低保真度评估(即质量较差的预测,例如由具有较少周期的神经网络产生的预测)来提高整体优化效率。这个概念在Hyperband(Li等人2018)中得到了应用,这是一种流行的多保真度超参数优化算法,动态分配更多资源给有前途的配置并终止性能较低的配置。Hyperband将在 Section 5.3 中详细讨论。

对于数值搜索空间,其他已实现的算法包括广义模拟退火(Xiang等人2013;Tsallis和Stariolo 1996)和各种非线性优化算法。

4.1.4.3 Choosing Strategies

As a rule of thumb, if the search space is small or does not have a complex structure, grid search may be able to exhaustively evaluate the entire search space in a reasonable time. However, grid search is generally not recommended due to the curse of dimensionality – the grid size ‘blows up’ very quickly as the number of parameters to tune increases – and insufficient coverage of numeric search spaces. By construction, grid search cannot evaluate a large number of unique values per hyperparameter, which is suboptimal when some hyperparameters have minimal impact on performance while others do. In such scenarios, random search is often a better choice as it considers more unique values per hyperparameter compared to grid search.

For higher-dimensional search spaces or search spaces with more complex structure, more guided optimization algorithms such as evolutionary strategies or Bayesian optimization tend to perform better and are more likely to result in peak performance. When choosing between evolutionary strategies and Bayesian optimization, the cost of function evaluation is highly relevant. If hyperparameter configurations can be evaluated quickly, evolutionary strategies often work well. On the other hand, if model evaluations are time-consuming and the optimization budget is limited, Bayesian optimization is usually preferred, as it is quite sample efficient compared to other algorithms, i.e., less function evaluations are needed to find good configurations. Hence, Bayesian optimization is usually recommended for HPO. While the optimization overhead of Bayesian optimization is comparably large (e.g., in each iteration, training of the surrogate model and optimizing the acquisition function), this has less of an impact in the context of relatively costly function evaluations such as resampling of ML models.

Finally, in cases where the hyperparameter optimization problem involves a meaningful fidelity parameter (e.g., number of epochs, number of trees, number of boosting rounds) and where the optimization budget needs to be spent efficiently, multi-fidelity hyperparameter optimization algorithms like Hyperband may be worth considering. For further details on different tuners and practical recommendations, we refer to Bischl et al. (2023).

作为一个经验法则,如果搜索空间较小或没有复杂的结构,网格搜索可能能够在合理的时间内详尽地评估整个搜索空间。然而,通常不建议使用网格搜索,因为维度的诅咒问题——随着要调整的参数数量的增加,网格大小会迅速增加——以及对数值搜索空间的不足覆盖。从构造上来说,网格搜索不能评估每个超参数的大量唯一值,这在某些超参数对性能影响较小而其他超参数对性能有显著影响的情况下是不够优化的。在这种情况下,随机搜索通常是更好的选择,因为它考虑了每个超参数的更多唯一值,相对于网格搜索而言。

对于维度较高的搜索空间或搜索空间具有更复杂结构的情况,更有导向性的优化算法,如进化策略或贝叶斯优化,往往表现更好,并更有可能产生最佳性能。在选择进化策略和贝叶斯优化之间,函数评估成本非常重要。如果可以快速评估超参数配置,通常进化策略效果良好。另一方面,如果模型评估需要耗费时间,且优化预算有限,通常首选贝叶斯优化,因为与其他算法相比,它相对高效,即需要更少的函数评估来找到好的配置。因此,通常建议在HPO中使用贝叶斯优化。虽然贝叶斯优化的优化开销相对较大(例如,在每个迭代中,训练替代模型和优化获取函数),但在相对昂贵的函数评估环境中,例如ML模型的重新抽样,这影响较小。

最后,在超参数优化问题涉及有意义的保真度参数(例如,周期数、树数、提升轮数)且需要高效利用优化预算的情况下,可能值得考虑使用多保真度超参数优化算法,例如Hyperband。关于不同调谐器和实际建议的更多详细信息,请参阅Bischl等人(2023)。

tuner = tnr("grid_search", resolution = 5, batch_size = 10)

tuner

#> <TunerGridSearch>: Grid Search

#> * Parameters: resolution=5, batch_size=10

#> * Parameter classes: ParamLgl, ParamInt, ParamDbl, ParamFct

#> * Properties: dependencies, single-crit, multi-crit

#> * Packages: mlr3tuningTODO:等待后续添加交叉引用 10.1.3

For our SVM example, we will use a grid search with a resolution of five for runtime reasons here (in practice a larger resolution would be preferred). The resolution is the number of distinct values to try per hyperparameter, which means in our example the tuner will construct a 5x5 grid of 25 configurations of equally spaced points between the specified upper and lower bounds. All configurations will be tried by the tuner (in random order) until either all configurations are evaluated or the terminator (Section 4.1.2) signals that the budget is exhausted. For grid and random search tuners, the batch_size parameter controls how many configurations are evaluated at the same time when parallelization is enabled (see Section 10.1.3), and also determines how many configurations should be applied before the terminator should check if the termination criterion has been reached.

对于我们的SVM示例,出于运行时的原因,我们将使用具有五个分辨率的网格搜索(在实践中,更大的分辨率将更可取)。分辨率是每个超参数要尝试的不同值的数量,这意味着在我们的示例中,调谐器将构建一个5x5的网格,其中包含25个在指定上限和下限之间等间距点的配置。调谐器将尝试所有配置(以随机顺序),直到所有配置都被评估或终止器(Section 4.1.2)发出预算已用尽的信号。对于网格搜索和随机搜索调谐器,

batch_size参数控制在启用并行化时同时评估多少个配置(请参阅第10.1.3节),并确定在终止器检查是否达到终止标准之前应用多少个配置。

tuner$param_set

#> <ParamSet>

#> id class lower upper nlevels

#> <char> <char> <num> <num> <num>

#> 1: batch_size ParamInt 1 Inf Inf

#> 2: resolution ParamInt 1 Inf Inf

#> 3: param_resolutions ParamUty NA NA Inf

#> default

#> <list>

#> 1: <NoDefault>\n Public:\n clone: function (deep = FALSE) \n initialize: function ()

#> 2: <NoDefault>\n Public:\n clone: function (deep = FALSE) \n initialize: function ()

#> 3: <NoDefault>\n Public:\n clone: function (deep = FALSE) \n initialize: function ()

#> value

#> <list>

#> 1: 10

#> 2: 5

#> 3:While changing the control parameters of the tuner can improve optimal performance, we have to take care that is likely the default settings will fit most needs. While it is not possible to cover all application cases, mlr3tuning’s defaults were chosen to work well in most cases. However, some control parameters like batch_size often interact with the parallelization setup (further described in Section 10.1.3) and may need to be adjusted accordingly.

尽管更改调谐器的控制参数可以改善最优性能,但我们必须注意,通常情况下默认设置将适用于大多数需求。虽然不可能涵盖所有应用情况,但

mlr3tuning的默认设置被选择为在大多数情况下表现良好。但是,一些控制参数,如batch_size,通常与并行化设置互动(在第10.1.3节中进一步描述),可能需要相应地进行调整。

4.1.4.4 Triggering the tuning process

Now that we have introduced all our components, we can start the tuning process. To do this we simply pass the constructed TuningInstanceSingleCrit to the $optimize() method of the initialized Tuner.

tuner$optimize(instance)instance$result$learner_param_vals

#> [[1]]

#> [[1]]$type

#> [1] "C-classification"

#>

#> [[1]]$kernel

#> [1] "radial"

#>

#> [[1]]$cost

#> [1] 50000.05

#>

#> [[1]]$gamma

#> [1] 0.14.1.5 Logarithmic Transformations

To add this transformation to a hyperparameter we simply pass logscale = TRUE to to_tune().

learner = lrn("classif.svm",

cost = to_tune(1e-5, 1e5, logscale = TRUE),

gamma = to_tune(1e-5, 1e5, logscale = TRUE),

kernel = "radial",

type = "C-classification")

instance = ti(

task = tsk_sonar,

learner = learner,

resampling = rsmp("cv", folds = 3),

measures = msr("classif.ce"),

terminator = trm("none")

)

tuner$optimize(instance)Note that the fields cost and gamma show the optimal values before transformation, whereas x_domain and learner_param_vals contain optimal values after transformation, it is these latter fields you would take forward for future model use.

请注意,

cost和gamma字段显示了变换之前的最佳值,而x_domain和learner_param_vals包含了变换之后的最佳值,对于未来的模型使用,您应该使用后者的字段。

instance$result$x_domain

#> [[1]]

#> [[1]]$cost

#> [1] 1e+05

#>

#> [[1]]$gamma

#> [1] 0.0031622784.1.6 Analyzing and Using the Result

as.data.table(instance$archive)[1:3, .(cost, gamma, classif.ce)]

#> cost gamma classif.ce

#> <num> <num> <num>

#> 1: -5.756463 0.000000 0.4663216

#> 2: -5.756463 5.756463 0.4663216

#> 3: 0.000000 -11.512925 0.4663216Another powerful feature of the instance is that we can score the internal ResampleResults on a different performance measure, for example looking at false negative rate and false positive rate as well as classification error:

as.data.table(

instance$archive,

measures = msrs(c("classif.fpr", "classif.fnr"))

)[1:5, .(cost, gamma, classif.ce, classif.fpr, classif.fnr)]

#> cost gamma classif.ce classif.fpr classif.fnr

#> <num> <num> <num> <num> <num>

#> 1: -5.756463 0.000000 0.4663216 1.000000 0.0000000

#> 2: -5.756463 5.756463 0.4663216 1.000000 0.0000000

#> 3: 0.000000 -11.512925 0.4663216 1.000000 0.0000000

#> 4: 0.000000 -5.756463 0.2400966 0.277289 0.2077999

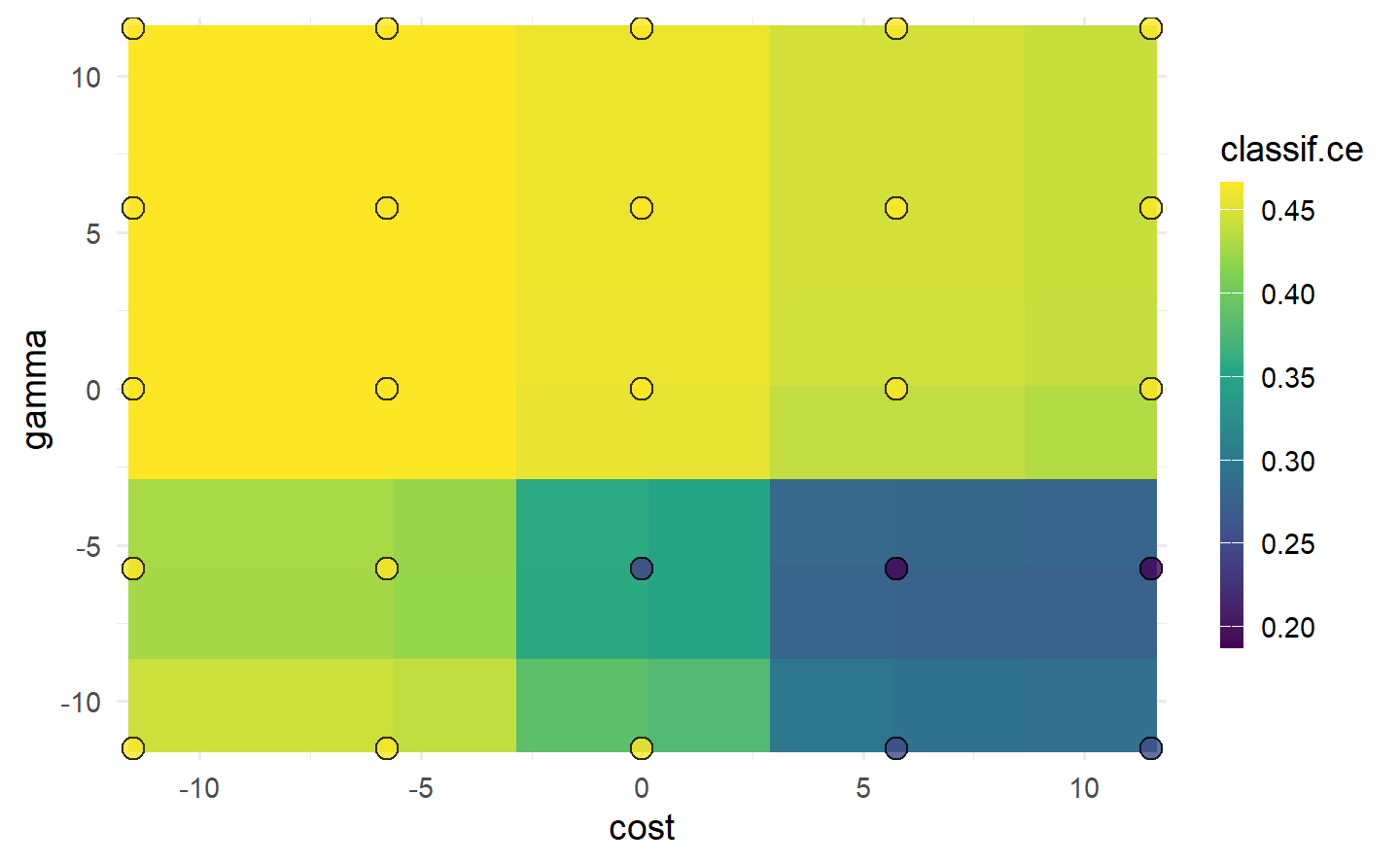

#> 5: 0.000000 11.512925 0.4663216 1.000000 0.0000000autoplot(instance, type = "surface")

cost and gamma. Bright yellow regions represent the model performing worse and dark blue performing better. We can see that high cost values and low gamma values achieve the best performance. Note that we should not directly infer the performance of new unseen values from the heatmap since it is only an interpolation based on a surrogate model (regr.ranger). However, we can see the general interaction between the hyperparameters.

Once we found good hyperparameters for our learner through tuning, we can use them to train a final model on the whole data. To do this we simply construct a new learner with the same underlying algorithm and set the learner hyperparameters to the optimal configuration:

在通过调整找到学习器的良好超参数之后,我们可以使用它们在整个数据集上训练最终模型。为此,我们只需构建一个新的学习器,使用相同的底层算法,并将学习器的超参数设置为最佳配置:

lrn_svm_tuned = lrn("classif.svm")

lrn_svm_tuned$param_set$values = instance$result_learner_param_vals

lrn_svm_tuned$train(tsk_sonar)$model

#>

#> Call:

#> svm.default(x = data, y = task$truth(), type = "C-classification",

#> kernel = "radial", gamma = 0.00316227766016838, cost = 1e+05,

#> probability = (self$predict_type == "prob"))

#>

#>

#> Parameters:

#> SVM-Type: C-classification

#> SVM-Kernel: radial

#> cost: 1e+05

#>

#> Number of Support Vectors: 93

4.2 Convenient Tuning with tune and auto_tuner

In the previous section, we looked at constructing and manually putting together the components of HPO by creating a tuning instance using ti(), passing this to the tuner, and then calling $optimize() to start the tuning process. mlr3tuning includes two helper methods to simplify this process further.

The first helper function is tune(), which creates the tuning instance and calls $optimize() for you. You may prefer the manual method with ti() if you want to view and make changes to the instance before tuning.

在上一节中,我们看到了通过使用

ti()创建调整实例,将其传递给调整器,然后调用$optimize()来启动调整过程,来构建和手动组合HPO的组件。mlr3tuning包括两个辅助方法,以进一步简化这个过程。第一个辅助函数是

tune(),它创建调整实例并为您调用$optimize()。如果您想在调整之前查看并对实例进行更改,可能更喜欢使用ti()的手动方法。

tnr_grid_search = tnr("grid_search", resolution = 5, batch_size = 5)

lrn_svm = lrn(

"classif.svm",

cost = to_tune(1e-5, 1e5, logscale = TRUE),

gamma = to_tune(1e-5, 1e5, logscale = TRUE),

kernel = "radial",

type = "C-classification"

)

rsmp_cv3 = rsmp("cv", folds = 3)

msr_ce = msr("classif.ce")

instance = tune(

tuner = tnr_grid_search,

task = tsk_sonar,

learner = lrn_svm,

resampling = rsmp_cv3,

measures = msr_ce

)

instance$resultThe other helper function is auto_tuner, which creates an object of class AutoTuner. The AutoTuner inherits from the Learner class and wraps all the information needed for tuning, which means you can treat a learner waiting to be optimized just like any other learner. Under the hood, the AutoTuner essentially runs tune() on the data that is passed to the model when $train() is called and then sets the learner parameters to the optimal configuration.

另一个辅助函数是

auto_tuner,它创建一个AutoTuner类的对象。AutoTuner继承自Learner类,并包装了所有需要进行调整的信息,这意味着您可以像处理任何其他学习器一样处理等待优化的学习器。在底层,AutoTuner实际上在调用$train()时对传递给模型的数据上运行了tune(),然后将学习器参数设置为最佳配置。

at = auto_tuner(

tuner = tnr_grid_search,

learner = lrn_svm,

resampling = rsmp_cv3,

measure = msr_ce

)

at

#> <AutoTuner:classif.svm.tuned>

#> * Model: list

#> * Search Space:

#> <ParamSet>

#> id class lower upper nlevels

#> <char> <char> <num> <num> <num>

#> 1: cost ParamDbl -11.51293 11.51293 Inf

#> 2: gamma ParamDbl -11.51293 11.51293 Inf

#> default

#> <list>

#> 1: <NoDefault>\n Public:\n clone: function (deep = FALSE) \n initialize: function ()

#> 2: <NoDefault>\n Public:\n clone: function (deep = FALSE) \n initialize: function ()

#> value

#> <list>

#> 1:

#> 2:

#> Trafo is set.

#> * Packages: mlr3, mlr3tuning, mlr3learners, e1071

#> * Predict Type: response

#> * Feature Types: logical, integer, numeric

#> * Properties: multiclass, twoclassAnd we can now call $train(), which will first tune the hyperparameters in the search space listed above before fitting the optimal model.

split = partition(tsk_sonar)

at$train(tsk_sonar, row_ids = split$train)

at$predict(tsk_sonar, row_ids = split$test)$score()The AutoTuner contains a tuning instance that can be analyzed like any other instance.

at$tuning_instance$result

#> cost gamma learner_param_vals x_domain classif.ce

#> <num> <num> <list> <list> <num>

#> 1: 5.756463 -11.51293 <list[4]> <list[2]> 0.2377428We could also pass the AutoTuner to resample() and benchmark(), which would result in a nested resampling, discussed next.

4.3 Nested Resampling

Nested resampling separates model optimization from the process of estimating the performance of the tuned model by adding an additional resampling, i.e., while model performance is estimated using a resampling method in the ‘usual way’, tuning is then performed by resampling the resampled data (Figure 4.2).

嵌套重抽样通过添加额外的重抽样来将模型优化与估计调整模型性能的过程分开,即在“通常方式”中使用重抽样方法来估计模型性能,然后通过对重抽样数据进行重抽样来进行调整(Figure 4.2)。

Figure 4.2 represents the following example of nested resampling:

Outer resampling start – Instantiate three-fold CV to create different testing and training datasets.

Inner resampling – Within the outer training data instantiate four-fold CV to create different inner testing and training datasets.

HPO – Tune the hyperparameters on the outer training set (large, light blue blocks) using the inner data splits.

Training – Fit the learner on the outer training dataset using the optimal hyperparameter configuration obtained from the inner resampling (small blocks).

Evaluation – Evaluate the performance of the learner on the outer testing data (large, dark blue block).

Outer resampling repeats – Repeat (2)-(5) for each of the three outer folds.

Aggregation – Take the sample mean of the three performance values for an unbiased performance estimate.

The inner resampling produces generalization performance estimates for each configuration and selects the optimal configuration to be evaluated on the outer resampling. The outer resampling then produces generalization estimates for these optimal configurations. The result from the outer resampling can be used for comparison to other models trained and tested on the same outer folds.

Figure 4.2 表示嵌套重抽样的以下示例:

外部重抽样开始 - 实例化三折交叉验证以创建不同的测试和训练数据集。

内部重抽样 - 在外部训练数据中实例化四折交叉验证以创建不同的内部测试和训练数据集。

HPO - 使用内部数据拆分在外部训练集(大的浅蓝色块)上调整超参数。

训练 - 使用从内部重抽样获得的最佳超参数配置在外部训练数据集上拟合学习器(小块)。

评估 - 在外部测试数据上评估学习器的性能(大的深蓝色块)。

外部重抽样重复 - 对三个外部折叠中的每一个重复步骤(2)-(5)。

聚合 - 取三个性能值的样本均值以获得无偏性能估计。

内部重抽样为每个配置生成泛化性能估计,并选择要在外部重抽样中评估的最佳配置。然后,外部重抽样为这些最佳配置生成泛化估计。外部重抽样的结果可以用于与在相同外部折叠上训练和测试的其他模型进行比较。

A common mistake is to think of nested resampling as a method to select optimal model configurations. Nested resampling is a method to compare models and to estimate the generalization performance of a tuned model, however, this is the performance based on multiple different configurations (one from each outer fold) and not performance based on a single configuration. If you are interested in identifying optimal configurations, then use tune()/ti() or auto_tuner() with $train() on the complete dataset.

一个常见的错误是将嵌套重抽样视为选择最佳模型配置的方法。嵌套重抽样是一种用于比较模型和估计调整后模型的泛化性能的方法,但这是基于多种不同配置的性能(每个配置来自于外部折叠的一个),而不是基于单个配置的性能。如果您有兴趣确定最佳配置,那么请使用

tune()/ti()或auto_tuner()与$train()在完整数据集上进行操作。

4.3.1 Nested Resampling with an AutoTuner

at = auto_tuner(

tuner = tnr_grid_search,

learner = lrn_svm,

resampling = rsmp("cv", folds = 4),

measure = msr_ce

)

rr = resample(

task = tsk_sonar,

learner = at,

resampling = rsmp_cv3,

store_models = TRUE

)

rrrr$aggregate()

#> classif.ce

#> 0.1733609extract_inner_tuning_results(rr)[,

.(iteration, cost, gamma, classif.ce)]

#> iteration cost gamma classif.ce

#> <int> <num> <num> <num>

#> 1: 1 11.51293 -5.756463 0.1573529

#> 2: 2 11.51293 -5.756463 0.1441176

#> 3: 3 11.51293 -5.756463 0.2533613extract_inner_tuning_archives(rr)[1:3,

.(iteration, cost, gamma, classif.ce)]

#> iteration cost gamma classif.ce

#> <int> <num> <num> <num>

#> 1: 1 -11.512925 5.756463 0.4310924

#> 2: 1 0.000000 0.000000 0.4310924

#> 3: 1 5.756463 -11.512925 0.26470594.3.2 The Right (and Wrong) Way to Estimate Performance

In this short section we will empirically demonstrate that directly reporting tuning performance without nested resampling results in optimistically biased performance estimates.

在这个简短的部分中,我们将通过实验证明,直接报告调优性能而不使用嵌套重抽样会导致性能估计存在乐观偏差。

lrn_xgboost = lrn(

"classif.xgboost",

eta = to_tune(1e-4, 1, logscale = TRUE),

max_depth = to_tune(1, 20),

colsample_bytree = to_tune(1e-1, 1),

colsample_bylevel = to_tune(1e-1, 1),

lambda = to_tune(1e-3, 1e3, logscale = TRUE),

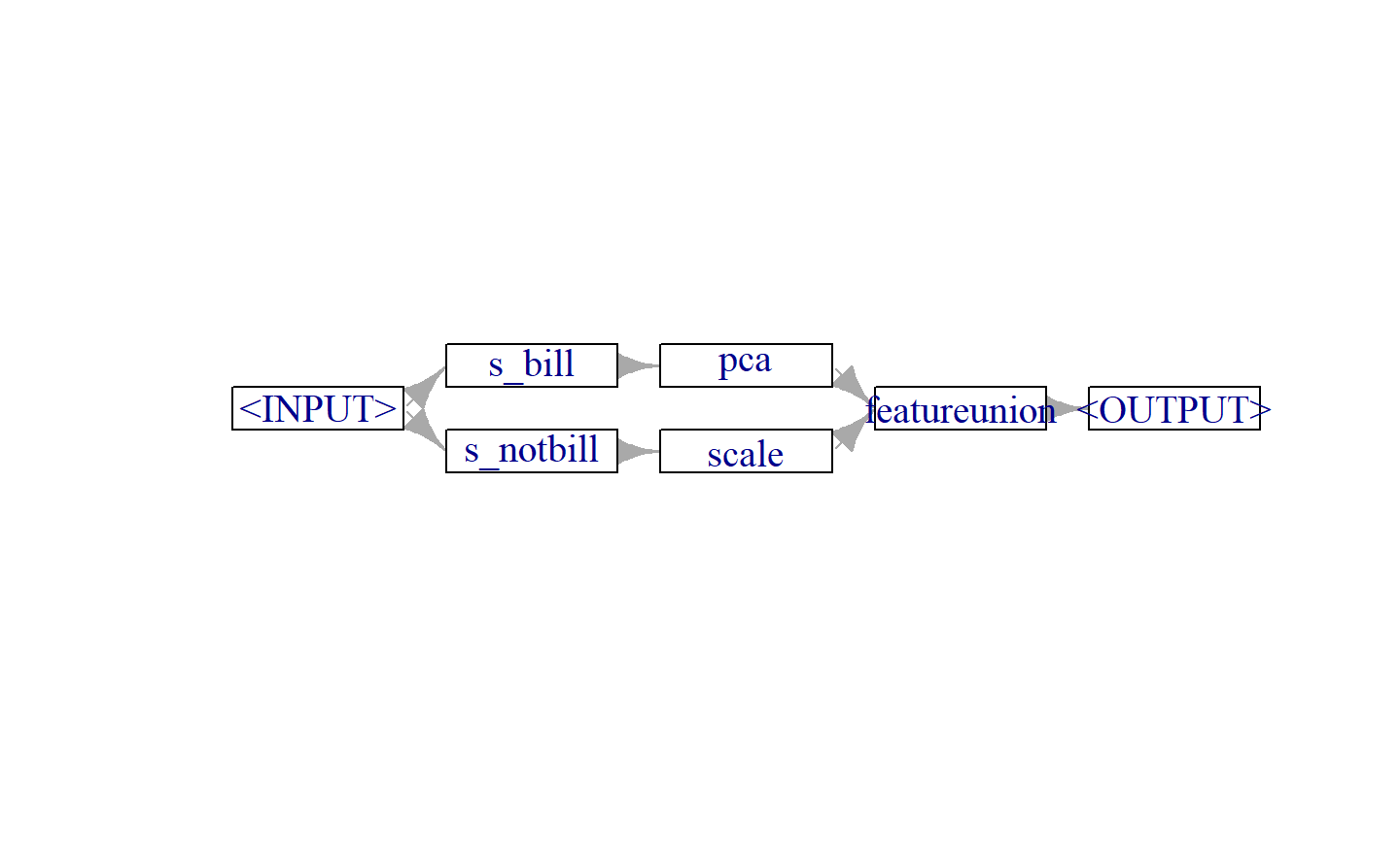

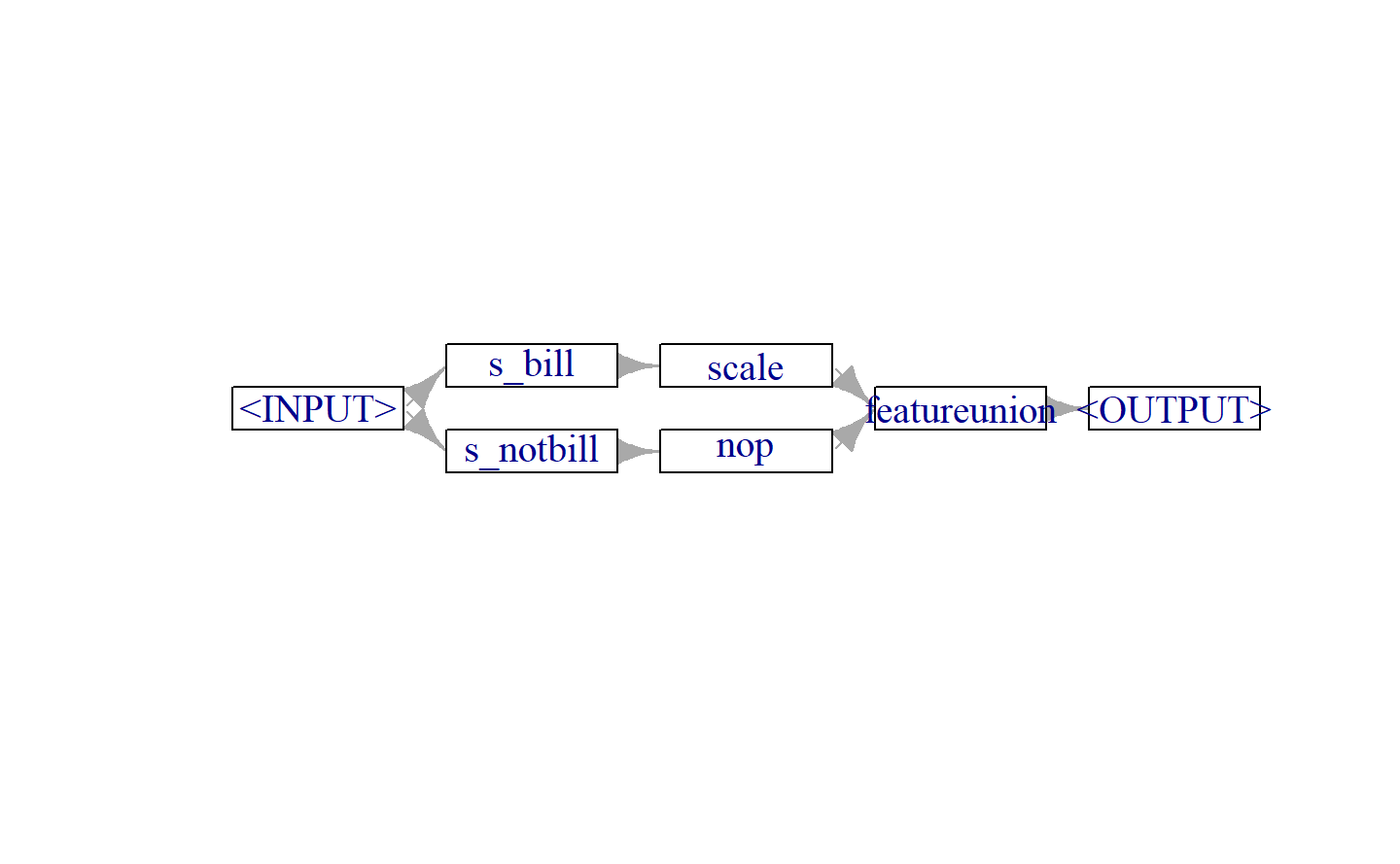

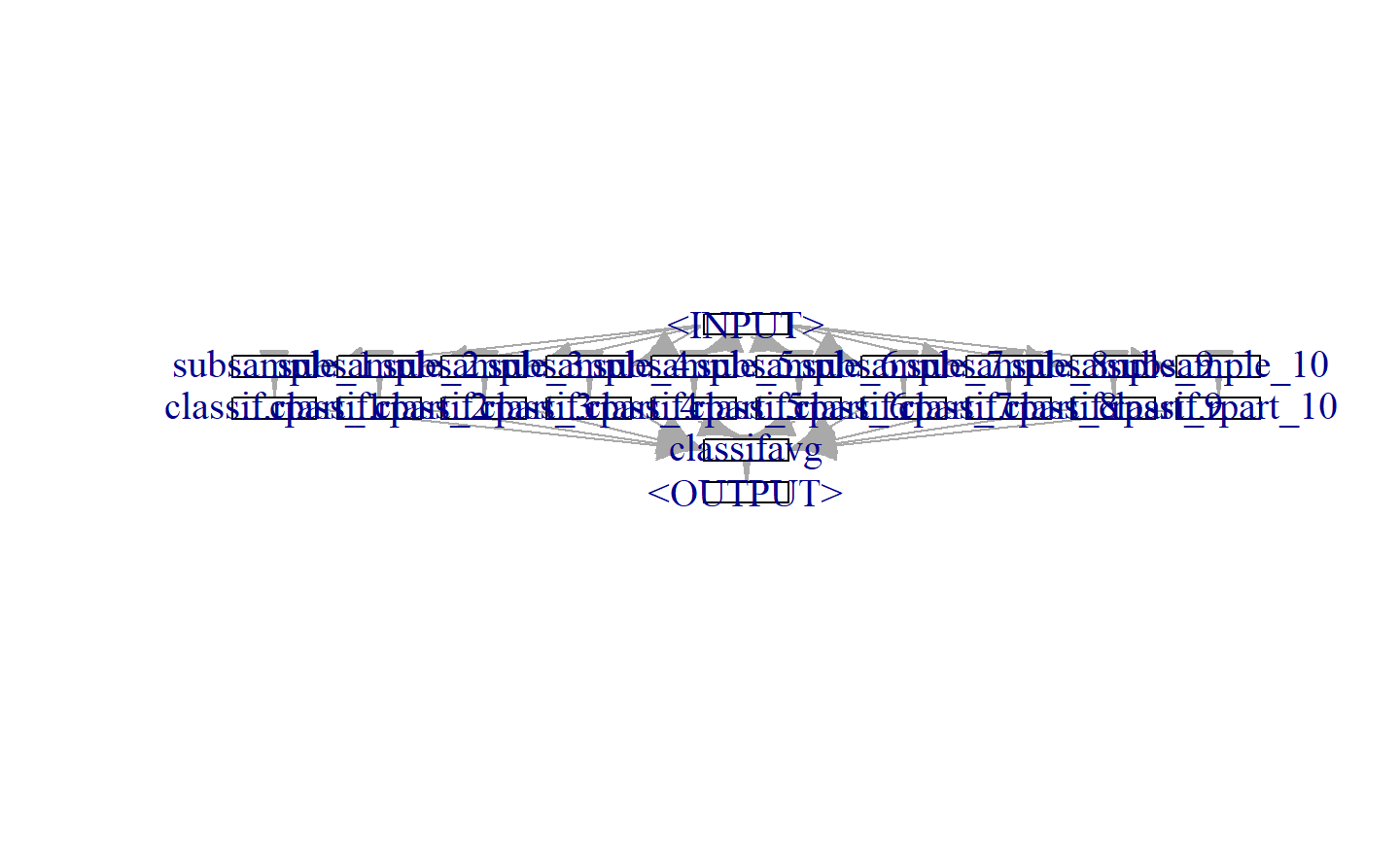

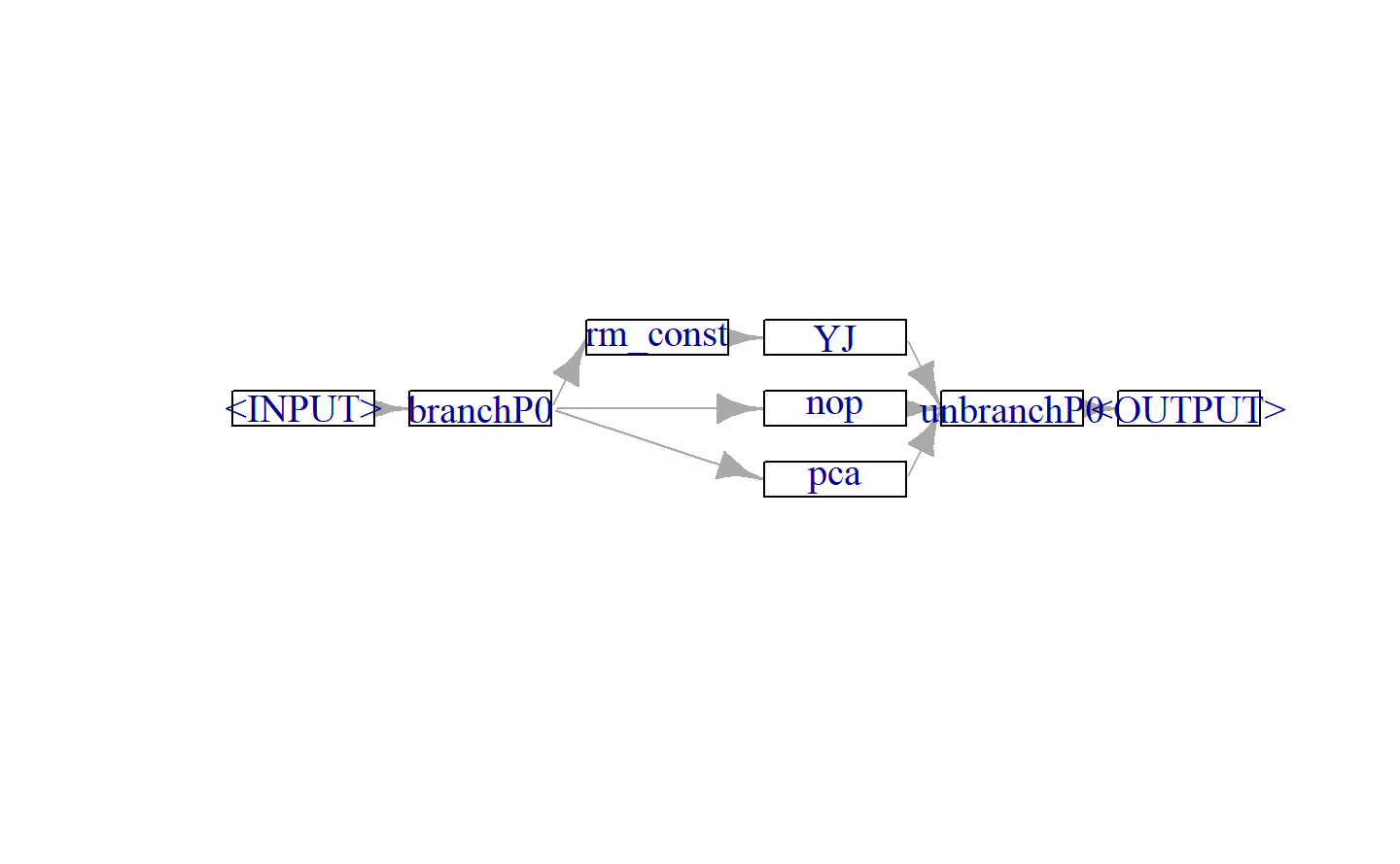

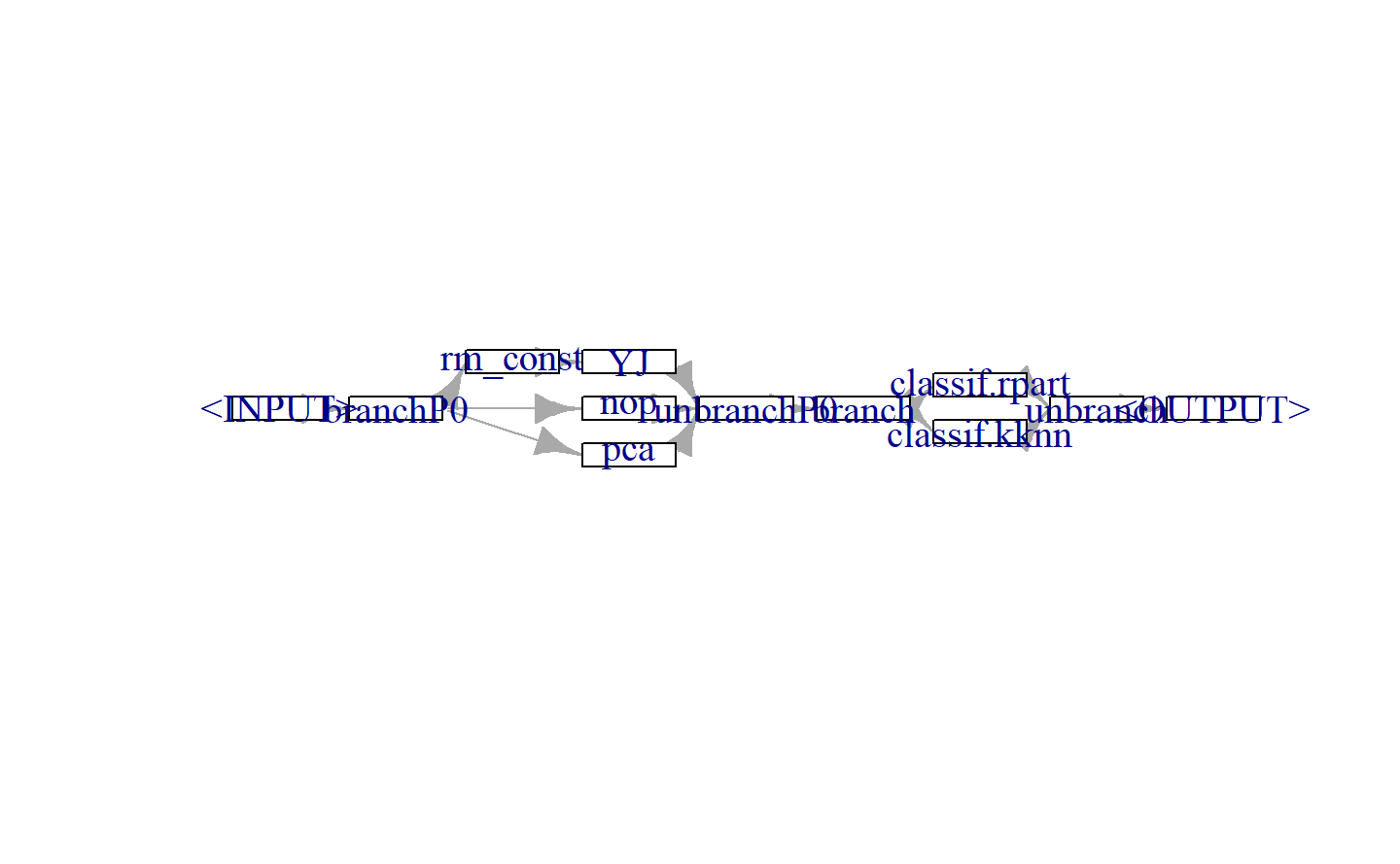

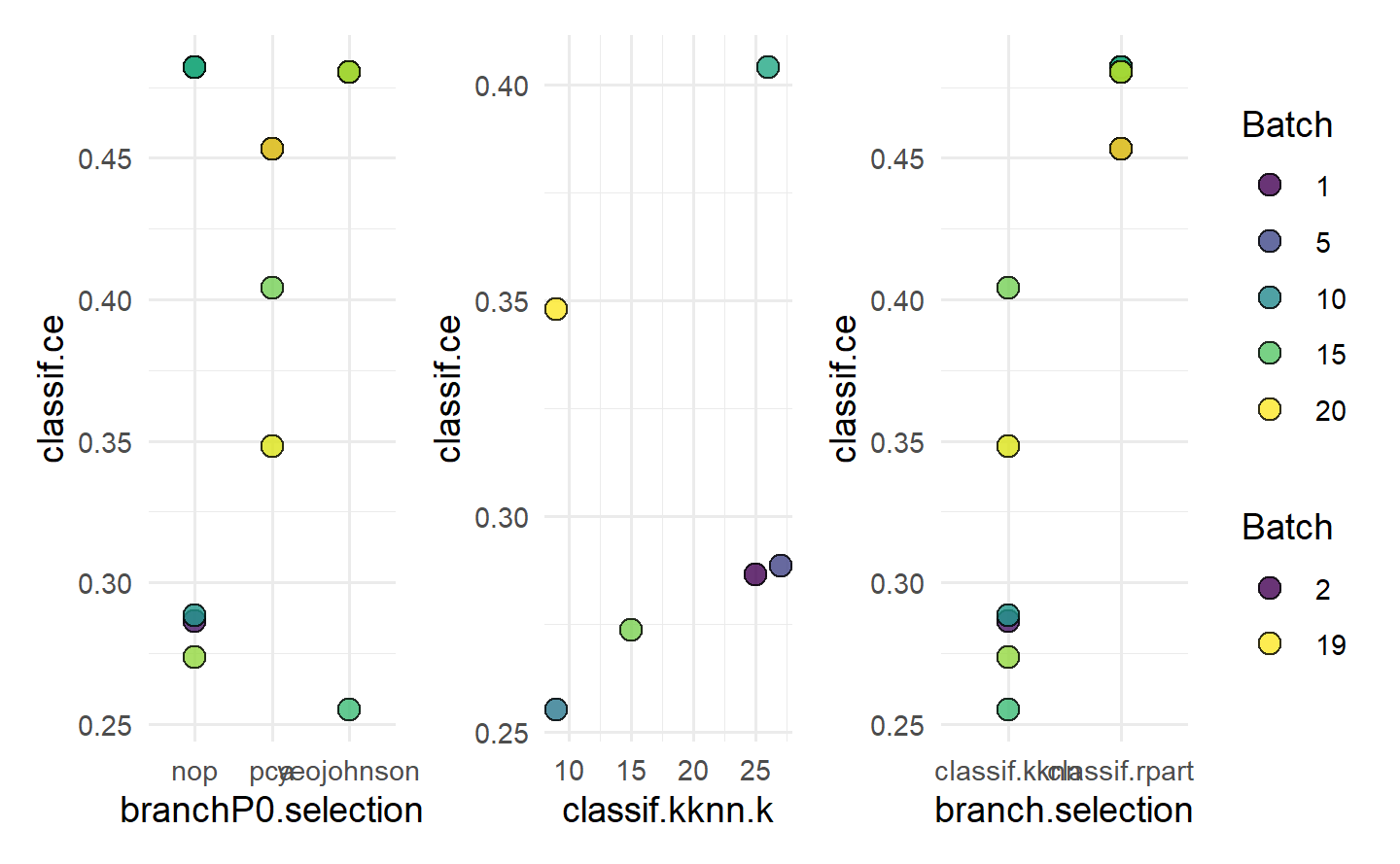

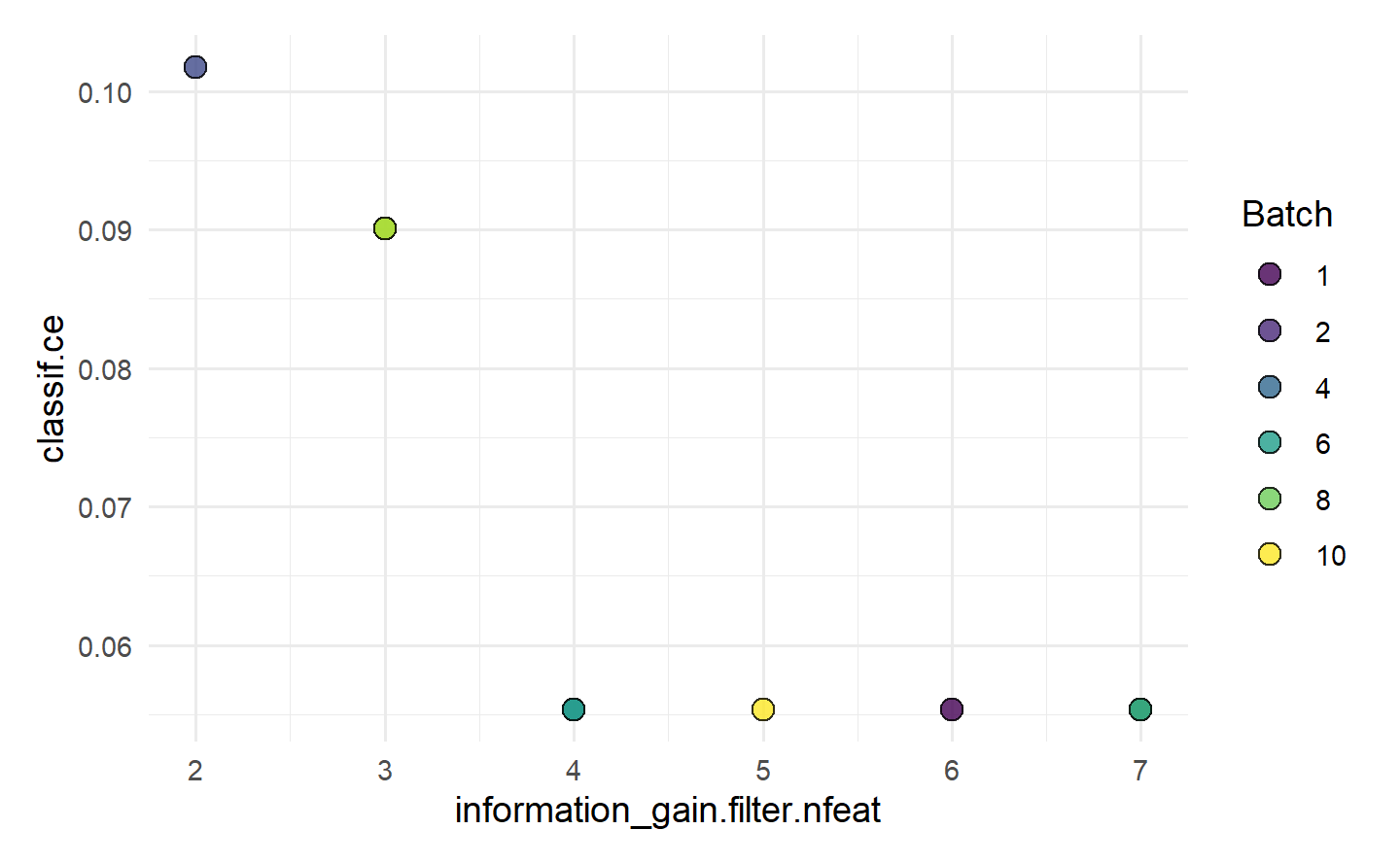

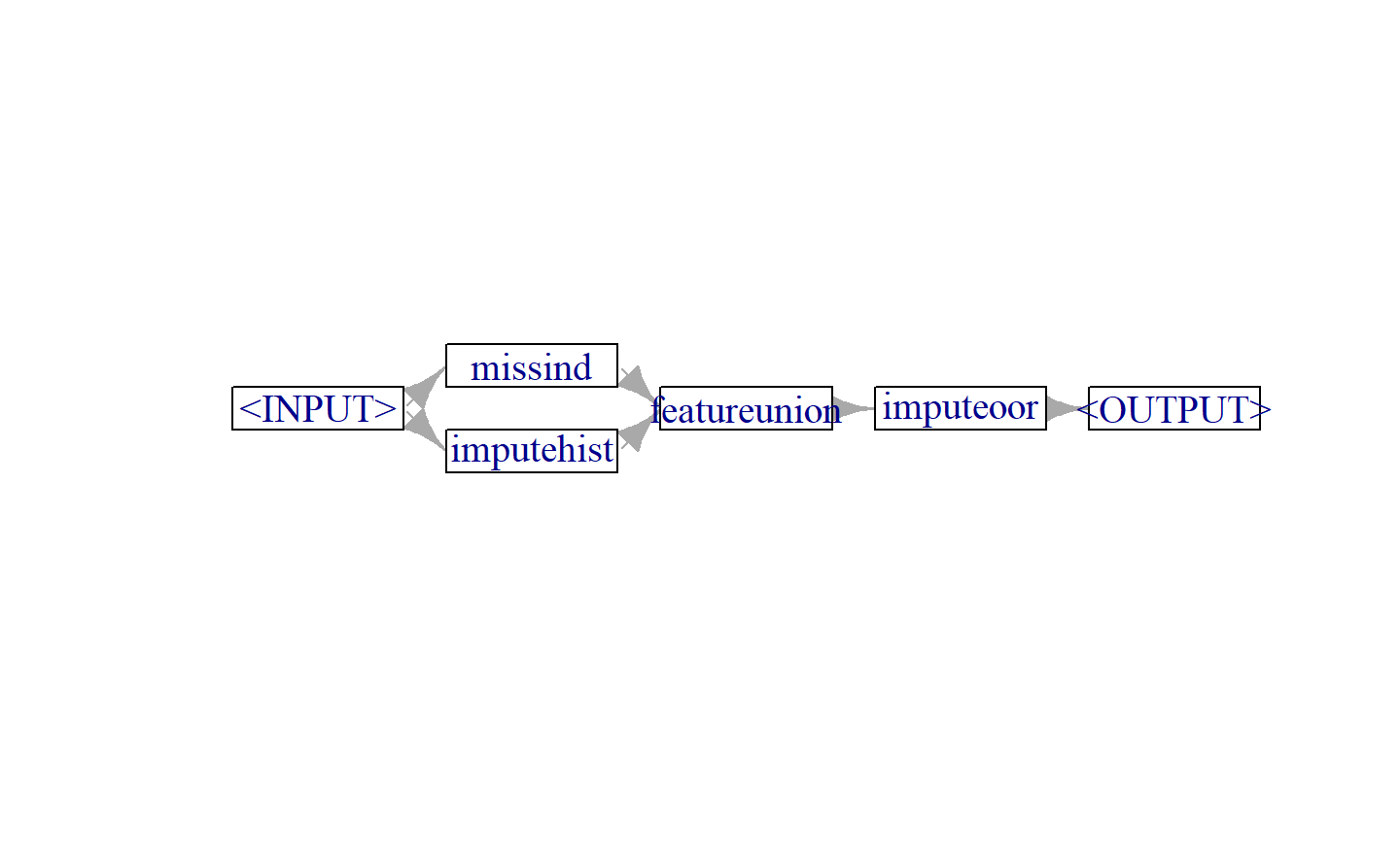

alpha = to_tune(1e-3, 1e3, logscale = TRUE),